First iteration done as in dinner

Well, 30 days later and our first sprint is done. We finished ahead of schedule, at about 2pm the day before the sprint “officially” ended, with one task remaining that the QA guy had to do. It was a fun and challenging ride. The entire team (sans me) had to deal with the following new technologies:

- Smart Client Software Factory

- Composite UI Application Block

- Enterprise Library

- Model-View-Presenter

- .NET 2.0 and Generics, Delegates, etc.

- ClickOnce

- Unit Tests

- TDD

- Visual Studio 2005

- Team System

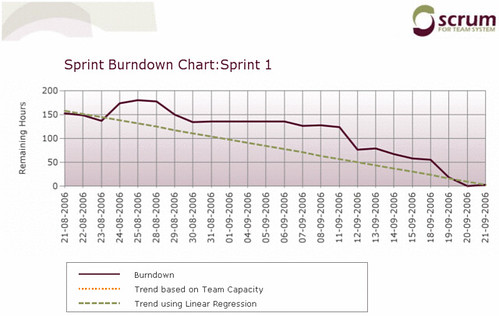

As well as adapt to the Scrum process as they were mostly used to waterfall-esqe approaches (BDUF, highly detailed specs, etc.). I think everyone adapted pretty well. We had some churn the first couple of weeks as the plumbing got settled, we got used to using Team System (as a team) without clobbering each others work. Here’s the burndown from the iteration:

Things were a little goofy. First off, I entered all the features into the Product Backlog, but then another guy (while I was enjoying the backwoods of B.C.) entered the same set and deleted my initial ones. Then we forgot to enter the initial estimates for the sprint. Finally, when we did enter the estimates, we entered them in hours, and Scrum for Team System uses days for the product backlog items. So that explains the false start (I’m still trying to manipulate the original warehouse database to correct that).

The other three gaps were caused by overestimation. On the 28th of August, we introduced a data layer using NetTiers and a few simple tables. That knocked two tasks that were pegged at 14 hours each down to nothing in a day. On the 11th of September we did the same thing for the next set of data we needed to store. Finally, on the 18th of September we realized there were 3 or 4 tasks estimated at about 8–10 hours each that were really just rolled up in another screen, so those were knocked off in a day.
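To show why knocking out overestimated tasks gives you a cliff instead of a slope, here is a rough sketch in Python. The task names and every number other than the two 14-hour tasks are made up for illustration; this is not how Scrum for Team System computes its chart, just the arithmetic behind it.

```python
def remaining_hours(tasks):
    """Sum the remaining estimate across all tasks."""
    return sum(tasks.values())


# Hypothetical backlog; only the two 14-hour tasks come from the story above.
tasks = {
    "customer data access": 14,
    "order data access": 14,
    "search screen": 10,
}

burndown = [remaining_hours(tasks)]

# A normal day: a few hours of real progress on one task.
tasks["search screen"] -= 4
burndown.append(remaining_hours(tasks))

# The day the generated data layer arrives: both tasks drop to zero at once.
tasks["customer data access"] = 0
tasks["order data access"] = 0
burndown.append(remaining_hours(tasks))

print(burndown)  # [38, 34, 6]
```

A steady day moves the line by 4 hours; the day the data layer lands moves it by 28, which is the kind of gap you see in the chart.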

Not the prettiest sprint burndown chart out there, but it’s real numbers.

As for our Sprint Review, that was almost a huge gaffe. Our review with the customers was yesterday at 10am. We had stopped development and put out a release for final QA testing by noon the day before. Everything worked fine. The QA guy and some devs were working until about 9pm that night, either just admiring what we did or adding little things we wanted to put into the system (but weren’t going to show), and all was well. Then at 7:30 the next morning, our QA guy came in and couldn’t run the app. By the time 9am rolled around and we were checking the release to go over the demo, nothing worked. We tried on every machine and still no dice.

At 9:30 we reset the database and I thought we had done something horrible because the app wouldn’t even launch. Man, we were sweating bullets. Finally we determined that something had happened with the proxy server on the network, so launching our ClickOnce app was failing and the exception handler couldn’t deal with it (for whatever reason). The network guys push stuff out all the time, but apps for this customer were never this complicated, so nothing was ever affected. Anyways, to get the demo up and running we commented out some handling and it ran fine.

We were really pressed for time and didn’t have time to start up the laptop to demo the system, and I didn’t want to chance having that fail, so we grabbed one dev’s desktop (a Dell Dimension E521), his keyboard, mouse, and screen (luckily it was an LCD and not a CRT) and hauled ass across the street to the building where we did the review.

At the end of the day, the customer was happy with what we did. There were a few suggestions and ideas that we’ll add to the next sprint. We spent an hour and a half going over the backlog items for the next sprint and ended up with a nice list to deliver the first release at the end of October. The next burndown should be more of a slope than a bumpy ride, but the team had fun and we delivered working software, which was the key thing.

One more note. While we were going through the product, we couldn’t figure out why a list of values was not coming back from a corporate web service. A different list we used was being populated from that same service, but the one list of numbers we wanted wasn’t. Later that day I found out that one of the devs in the other building had deleted that web service method because he thought it was a duplicate. Luckily, our system was built using Smart Web References (part of the Smart Client Software Factory), which simply return the list of values along with a success/failure value for the web service call. The failure isn’t exposed to the user, but at least the app didn’t hit an unhandled exception during the demo. Thanks Les!
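For anyone curious, the general idea that saved us can be sketched like this. To be clear, this is not the Smart Client Software Factory API. It's a minimal Python illustration of the pattern, and `ServiceResult`, `safe_call`, `get_regions`, and `get_account_numbers` are all hypothetical names. The caller always gets back a list plus a success flag, never an exception.

```python
from dataclasses import dataclass, field
from typing import Callable, Generic, List, TypeVar

T = TypeVar("T")


@dataclass
class ServiceResult(Generic[T]):
    """Hypothetical result wrapper: the values plus a success flag."""
    succeeded: bool
    values: List[T] = field(default_factory=list)
    error: str = ""


def safe_call(service_method: Callable[[], List[T]]) -> ServiceResult[T]:
    """Call a remote method and turn any failure into a result object."""
    try:
        return ServiceResult(succeeded=True, values=list(service_method()))
    except Exception as exc:  # deliberately broad: the UI must not crash
        return ServiceResult(succeeded=False, error=str(exc))


def get_regions() -> List[str]:
    # Stand-in for a service method that still exists.
    return ["North", "South"]


def get_account_numbers() -> List[int]:
    # Stand-in for a method that someone deleted from the service.
    raise ConnectionError("method not found on service")


regions = safe_call(get_regions)
numbers = safe_call(get_account_numbers)
print(regions.succeeded, regions.values)
print(numbers.succeeded, numbers.values)
```

The screen just binds to `values` either way, so a missing method shows up as an empty list rather than a crash. The trade-off is exactly what bit us: the failure is quiet, so somebody has to actually check the flag.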