Archives

-

Speaking on SubSonic at the San Diego Code Camp this Saturday

I'll be presenting a session at the San Diego Code Camp this Saturday (6/30/07) titled "Using SubSonic to build ASP.NET applications that are good, fast, and cheap". I'll do a quick overview of SubSonic in general, but spend most of the time building out a website. If you're interested in following along on your laptop, be sure to grab a copy of SubSonic 2.0.2 (the latest release) from CodePlex.

-

The value of "good enough" technology

Twitter drives all my tech-savvy friends crazy. We all agree that the idea - a simple mix of blog, chat, and IM - is a good one. However, the site does very little, and what it does, it does poorly - slow response, frequent outages, etc. Most developers figure they could write a "better Twitter" in a lazy afternoon, and some already have. Good idea, poor execution, and yet... it's good enough.

-

Silverlight content only prints in IE (for now)

Last night I made a simple Silverlight maze generator for my 6-year-old daughter, who's really into mazes right now. When I tried to print the resulting mazes, I found that the Silverlight content was blank in Firefox (left), but worked in IE (right):

-

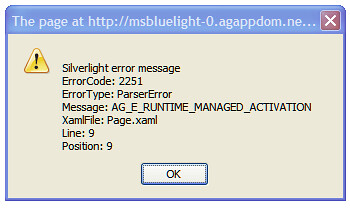

[Silverlight] "AG_E_RUNTIME_MANAGED_ACTIVATION" = You don't have Silverlight 1.1 installed

I'm thinking a better error message might be in order when folks try to view Silverlight 1.1 content with managed code and only have Silverlight 1.0 installed, but for now this is what you get (obviously the last three lines will vary depending on the actual XAML content):

-

[SQL Server Analysis Services] - "Errors in the metadata manager" when restoring a backup

I had trouble restoring a SQL Server 2005 Analysis Services backup today due to "Errors in the metadata manager" messages:

The ddl2:MemberKeysUnique element at line 243, column 28420 (namespace http://schemas.microsoft.com/analysisservices/2003/engine/2) cannot appear under Load/ObjectDefinition/Dimension/Hierarchies/Hierarchy.

Errors in the metadata manager. An error occurred when instantiating a metadata object from the file, '\\?\C:\Program Files\Microsoft SQL Server\MSSQL.2\OLAP\Data\...

-

Silverlight Maze

This requires Silverlight 1.1 Alpha, available here. If you only have Silverlight 1.0 installed, you'll get a wacky error message (AG_E_RUNTIME_MANAGED_ACTIVATION).

-

[SubSonic] LoadFromPost method maps controls to object properties

Since SubSonic generates data access code and cuts way down on the repetitive grunt work, I've started to resent having to write any code at all. On a recent project, we found that since we weren't writing much data access or mapping code, the majority of the code we had to write revolved around shuttling data between controls and object properties.
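To illustrate the general idea of mapping posted values onto object properties by name, here is a minimal sketch in Python. It is not SubSonic's LoadFromPost implementation or API: the `Product` class, the `load_from_post` function, and the form field names are all hypothetical, and the sketch assumes the posted field names match the property names exactly.

```python
from dataclasses import dataclass, fields


@dataclass
class Product:
    # Hypothetical model class for illustration; not part of SubSonic.
    name: str = ""
    price: float = 0.0
    in_stock: bool = False


def load_from_post(obj, form):
    """Copy posted form values onto the dataclass fields of obj that share their name."""
    for field in fields(obj):
        if field.name not in form:
            continue  # no posted value for this property, so leave it untouched
        raw = form[field.name]
        if field.type is bool:
            # Assumption: a ticked checkbox posts "on", "true", or "1"
            value = raw.strip().lower() in ("on", "true", "1")
        else:
            value = field.type(raw)  # convert the posted string, e.g. float("9.99")
        setattr(obj, field.name, value)
    return obj


# Posted values arrive as strings keyed by control name (hypothetical data).
product = load_from_post(
    Product(), {"name": "Widget", "price": "9.99", "in_stock": "on"}
)
print(product)
```

Matching by name means a new property needs no extra shuttling code, as long as a control with the same name exists on the page.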

-

Calling an ASMX webservice from Silverlight? Use a static port.

Rob Conery recently posted on Creating a Web Service-Enabled Login Silverlight Control, which is probably a more important topic than many people realize right now. Since Silverlight code runs client side in the user's browser, many tasks like database access and user authentication require what is by definition a "web service" (even if it uses REST or some other, non-ASMX approach).

Along the way, Rob ran into an interesting issue. Being the wise man that he is, Rob knew that he faced a choice:

- Figure out an odd brain teaser dealing with undocumented alpha technologies

- Mention the odd brain teaser to Jon, who would likely get hooked and stay up all night figuring it out

Rob's a smart guy; you can guess which he chose...

-

Safari on Windows - Browser testing just got a whole lot easier...

Funny, just last week I posted about using browsershots.org to see screenshots of your web application in a huge variety of browsers. Today, Apple announced Safari 3 runs on Windows.

-

Failed Orcas Beta 1 install - Check for Office 2007 Beta Installer records

The Orcas Beta 1 install kept failing on my laptop with a non-specific error. The install log didn't say anything very helpful:

Microsoft Web Designer Tools: [2] Component Microsoft Web Designer Tools returned an unexpected value.

setup.exe: [2] ISetupComponent::Pre/Post/Install() failed in ISetupManager::InternalInstallManager() with HRESULT -2147023293.

VS70pgui: [2] DepCheck indicates Microsoft Web Designer Tools is not installed.

-

browsershots.org - Test your site in a variety of browsers on Win, Mac, and Linux

A client needed some help with a display issue on Safari / Mac. Browsercam is a good solution and is reasonably priced, but for this simple issue I just needed to see the site in Safari / Mac and make sure I hadn't affected IE6 as well.

-

Silverlight and XAML, have you guys met Old Man SVG?

XAML uses a vector graphics markup which is very similar to SVG, but since it's part of a complete application framework, the designers decided to use a slightly different format. I expected that XAML would be able to load SVG, or that there would at least be plenty of tools to convert SVG to XAML, but it was tougher than I thought. After some background, I'll discuss some solutions I found.

-

VB.NET vs. C#, round 3?

VB.NET gets a hard time from C# developers. For a variety of reasons, the leading .NET programmers seem to be working in C#, and VB.NET developers get really tired of saying, "Hey, VB.NET can do that, too!"

-

Google Street View Maps - Not at all new, still great fun.

My friend wrote me last week to tell me about the astounding new feature Google Maps had added - Street View!

-

YOD'M 3D - Multiple Desktops for Windows

One of the coolest things I saw at MIX07 was on a Linux computer. Miguel was showing us something on his laptop when all of a sudden the desktop turned into a cube and started spinning around. Miguel told us it was Compiz, which has been available for the X Window System (GNOME, KDE) for over a year now. Sidenote: A lot of people know it as Beryl, which is a fork of Compiz. It sounds like Beryl is basically Compiz with a neater name and a better website. Anyhow, Beryl has rejoined the Compiz trunk.