We should be virtualizing Applications, not Machines

One of the benefits of my new job at Vertigo Software is that I have more frequent opportunities to talk with my co-worker, Jeff Atwood. If everything goes right, we argue... because if we agree, neither of us is going to learn anything. Recently, we argued about virtual machines. I think machine virtualization is hugely oversold. We let the technical elegance (gee whiz, a program that lets me pretend to run another computer as another program!) distract us from the fact that virtual machines are a sleazy, inelegant hack.

I was a teenage VM junkie

I'm saying this as someone who used to be a big VM advocate. A few years ago, I noticed that it took new developers as much as a week to get set up to develop on some of the company's more complex systems, so I worked with application leads to set up Virtual PC images which had everything pre-installed. Sure enough, I had new developers working against complex DCOM / .NET / DB2 / "Classic" ASP environments with tons of dependencies in the time it took to copy the VPC image off a DVD-ROM.

One problem: it was a lousy developer experience. Most of the developers kept quiet, but a new developer let me have it: "This stinks! It's totally slow! You really expect me to show up to work every day and work eight hours on a virtual machine?" He was right, it did stink. Even with extra memory and a beefy machine, virtual machines are fine for occasional use, but they're not workable for full time development. For instance, I frequently had to shut down all other applications (Outlook, browsers, etc.) so that the virtual machine would be responsive enough that I could get work done.

Let's admit that the virtual machine emperor has no clothes on. Virtualizing machines is a neat parlor trick. It's tolerable for a few short-term uses (demonstrations, training, playing with alpha software, or testing different operating systems) but it's not a viable solution for real development.

Let's take fast computers and make them not

Resources

The problem is that virtualizing a computer in software is just incredibly inefficient. Virtual machines waste system resources like crazy - they're so inefficient that they make today's multi-core machines packed full of RAM crawl. Their blessing - they let us simulate an entire machine in software - is also their curse. Simulating an entire machine in software means they're running a separate network stack, drawing video on a pretend video card, managing pretend system resources on a pretend motherboard, playing sound on pretend soundcards, etc. Even if you spend some time and/or money optimizing your virtual machine image, virtual machines are still pigs compared to working on real machines.

Note: Machine Virtualization is a complex subject, and I'm glossing over things a little here. Virtual PC and VMware both use native virtualization combined with virtual machine additions to leverage physical hardware as much as possible, trapping and emulating only what's necessary. So, VMs don't emulate all hardware resources, but essentially filtering all CPU instructions still isn't a very efficient use of system resources.

Size

Then there's the problem of space. Even an optimized image with a significant amount of software - say, a development environment and a database - runs a few GB. While we can make space for them on today's large drives, the real problem is transportation and backup. Multi-GB virtual drive files are a pain to move around. And even if you do optimize them, you're keeping multiple copies of things like "C:\WINDOWS\Driver Cache\", "C:\WINDOWS\Microsoft.NET\", etc.

Updates and virus scanning

Many people mistakenly believe that they don't need to worry about safety (patches, automatic updates, virus scanners) on virtual machines since the host machine is taking care of those things. Not so. Each virtual machine with internet or network access is more than capable of becoming infected or compromised in the browser over port 80, or of spreading viruses which exploit network issues (e.g. SQL Slammer on UDP port 1434). A virtual machine needs to be patched and protected, but since it's not running regularly, it's less likely to have been Auto-Updated. So, if you're planning to work on a virtual machine, you should plan to spend twice as much time and effort on operating system maintenance.

Host machine services

A personal pet peeve is the vampire services that VMware runs. Yes, their VMware Player is free, but just by installing it you install a bunch of Windows Services which autostart with your computer and run until you uninstall the VMware Player, even if you never open a single VM.

Isolating your work environment makes it harder to get work done

By its very nature, virtualizing an environment means that your files run on what might as well be a separate computer. That means that it's difficult to take advantage of programs on the base machine. Yes, you can share clipboards and map drives to a virtual machine (while it's running), but that's about it. If, for instance, you run Outlook on the base machine and Visual Studio in a virtual machine, you end up jumping through some hoops to send the output of a program via e-mail, or view tabular data from SSMS in Excel, or add an emailed logo to your web application. These are all simple enough to get around, but they add significant friction to your daily work.

Why are we virtualizing entire machines again? Isn't there a better solution?

I sure think so, or this post would just be a useless rant. I try to avoid those.

How about running unvirtualized software?

For instance, I've been developing on Visual Studio 2008 since Beta 1, and I've got it installed side by side with Visual Studio 2005. No problems. I recently upgraded to Visual Studio 2008 Beta 2 with no problems. Truth be told, this wasn't my gutsy idea - Rob Conery did it, and (as with a few other things) I followed him over the cliff - fortunately in this case it was not a real actual cliff. As software consumers, we should be able to expect software that we can trust enough to, you know, actually install and use. I understand that beta software is beta software, and I'm a fan of releasing software early and often, but I should be able to expect that beta software won't trash my machine. As a side note here, I continue to be really impressed by the ever-increasing quality of Visual Studio releases.

The best way for developers to better the situation is to write software which is well compartmentalized, so we don't need to put it in an artificial container. This is commonly done by making good use of an application virtual machine (like the Common Language Runtime, the Java Virtual Machine, etc.). These technologies tend to steer you towards writing well isolated software, but for the sake of legacy integration they don't prevent you from going back to old, bad habits like writing to the Windows registry, modifying or installing shared resources, installing COM objects, or storing files and settings in the wrong place. Software written to run on an application virtual machine isn't guaranteed to be isolated, but it's generally more likely to play nice.
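To make "storing files and settings in the right place" a bit more concrete, here's a minimal Python sketch of an application that keeps its settings in a per-user folder rather than in the registry or a shared system directory. The function names, the `DemoApp` name, and the `settings.json` file name are all made up for illustration, and the non-Windows fallback to `XDG_CONFIG_HOME` or `~/.config` is an assumption of this sketch.

```python
import json
import os
from pathlib import Path


def user_settings_dir(app_name: str, env=os.environ) -> Path:
    """Return a per-user settings directory for the hypothetical app `app_name`."""
    # On Windows, APPDATA points at the per-user roaming profile folder.
    base = env.get("APPDATA")
    if base:
        return Path(base) / app_name
    # Elsewhere, fall back to XDG_CONFIG_HOME or ~/.config (assumption of this sketch).
    base = env.get("XDG_CONFIG_HOME")
    return (Path(base) if base else Path.home() / ".config") / app_name


def save_settings(app_name: str, settings: dict, env=os.environ) -> Path:
    """Write settings as JSON inside the per-user directory and return the file path."""
    target_dir = user_settings_dir(app_name, env)
    target_dir.mkdir(parents=True, exist_ok=True)
    path = target_dir / "settings.json"
    path.write_text(json.dumps(settings, indent=2), encoding="utf-8")
    return path


def load_settings(app_name: str, env=os.environ) -> dict:
    """Read settings back, returning an empty dict if none were saved yet."""
    path = user_settings_dir(app_name, env) / "settings.json"
    if not path.exists():
        return {}
    return json.loads(path.read_text(encoding="utf-8"))
```

Nothing here touches machine-wide state, so removing the app's folder removes its settings, and two versions can coexist by using different folder names.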

Folks talk about running under a non-administrator account, but I'm not completely convinced. While that solution does prompt you before modifying your system, it hasn't really been that helpful in my experience. Too much software just stops working under a lower privileged account. At that point you're back to the choice - install the software as an administrator or don't install it, but once you install as administrator you've pretty much said "Sure, do whatever you want to my system, please don't trash it." Hopefully software and users will both move together into an "administrator not required" world, but it's difficult as a software user to make that move before software vendors have fully embraced it.

Those other applications that won't play nice

So what do you do with applications that insist on modifying your system? Short of the Virtual Machine Nuclear Option, is there a way to handle software which, by design, makes fundamental changes to your system?

Yes, I'm thinking of you, Internet Explorer, and I'm shaking my head. You don't play nice - IE7 won't share a computer with IE6 without a fight. I know you've got a lot of excuses, and I still don't buy them (check out this video at 1:05:30, that's me bugging the IE team about this at MIX06). To my way of thinking, IE is a browser, and if the .NET Framework folks have figured out how to run entire platforms side by side, you can manage to do that with an application which renders web pages. I felt strongly enough about this to put a significant amount of time (okay, a ridiculous amount of time) into developing and supporting workarounds - not really for my use, but for thousands of web developers (judging from the downloads and hits). I thought the IE team's suggestion to go and use VPC was... well, pretty unhelpful, especially considering that the need to run IE6 and IE7 on the same machine was to try to write forward compatible HTML which still worked with IE6's messed up rendering. I'll move off the IE example before this turns more rantlike...

The point there, I guess, is that virtual machines are sleazy hacks which users can decide to use, but software vendors should be ashamed to require. So... no matter how much we wish we could install all our applications on one machine, some applications won't play nice. As it is now, the only out we've got is to virtualize the entire machine. Is there a way to sandbox applications which won't sandbox themselves?

Waiting for an Application Virtualization host

Yes - there's a better solution which has gone almost completely unnoticed: application virtualization. I've been reading about application virtualization technology for a while, but have been frustrated with how long it's taken to actually see it in practice. The idea is to host a program in a sandbox that virtualizes just the things we don't want modified - for instance, the registry and DLLs.
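As a toy illustration of that idea (not how any of the products below actually work), here's a Python sketch that treats the registry as a plain dictionary and layers a private, writable dictionary on top of it using the standard library's `collections.ChainMap`. The registry keys and values are hypothetical stand-ins.

```python
from collections import ChainMap

# Stand-in for the real machine-wide registry (hypothetical keys and values).
system_registry = {
    r"HKLM\Software\Demo\Version": "1.0",
    r"HKLM\Software\Demo\InstallDir": r"C:\Program Files\Demo",
}


def make_sandboxed_view(real: dict) -> ChainMap:
    """Return a view where writes land in a private layer and reads fall through."""
    private_layer = {}
    # ChainMap looks keys up in order, but only ever writes to the first mapping.
    return ChainMap(private_layer, real)


view = make_sandboxed_view(system_registry)

# The sandboxed app "upgrades" itself and adds a new key.
view[r"HKLM\Software\Demo\Version"] = "2.0-beta"
view[r"HKLM\Software\Demo\NewKey"] = "added"

# The app sees its own changes, while the real registry is untouched.
print(view[r"HKLM\Software\Demo\Version"])
print(system_registry[r"HKLM\Software\Demo\Version"])
```

The sandboxed program sees a consistent view that includes its own changes, and the host only has to throw away the private layer to undo them.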

GreenBorder and SoftGrid are two commercial solutions to this problem. The good news and bad news about both of these programs is that they've been bought in the past year - GreenBorder was bought by Google, and SoftGrid was bought by Microsoft. The good news part of that is that the technology is backed by major, established companies; the bad news is that the technology may be folded into larger product offerings (Hello, FolderShare? You guys got eaten by SkyDrive, but the best features haven't made it over yet... And Groove, sure miss the file preview in Groove 2007...).

SoftGrid

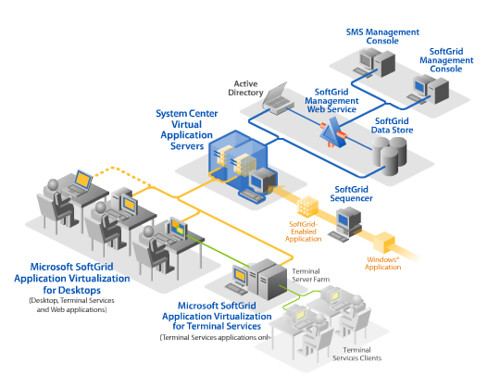

Here's a diagram from Microsoft's SoftGrid documentation:

Pretty cool, that's exactly what I'd like to be able to do. The problem (at least as I see it) is that this cool SystemGuard stuff is wrapped up inside a bigger Application Streaming system. The benefit is that existing applications can be wrapped up into packages which deploy kind of like ClickOnce applications - they're sandboxed, automatically updated, and centrally managed.

That's neat if you're looking at this as a complement to SMS from an enterprise IT point of view, but it's not helpful for individuals who just want to be able to install software on their local machine. I'm especially un-excited about application streaming after having to use Novell ZENWorks at a former job at a financial company. Virtualized Microsoft Office must sound great to IT folks, but it wasn't very productive for end users.

GreenBorder

GreenBorder takes a smaller scope - it just sandboxes browsers, making the border of the "safe" browser green (get it?).

That's a cool idea, but it's completely web-centric. I'd expect Google's use of it to stay that way. So, it's nice for safe browsing, but not helpful if you want to sandbox an arbitrary application on your computer.

Sandboxie

UPDATE: I just remembered another application virtualization program I've been meaning to look into: Sandboxie. The unregistered version of Sandboxie is free, and registration is only $25. From their site:

Sandboxie changes the rules such that write operations do not make it back to your hard disk.

The illustration shows the key component of Sandboxie: a transient storage area, or sandbox. Data flows in both directions between programs and the sandbox. During read operations, data may flow from the hard disk into the sandbox. But data never flows back from the sandbox into the hard disk.

If you run Freecell inside the Sandboxie environment, Sandboxie reads the statistics data from the hard disk into the sandbox, to satisfy the read requested by Freecell. When the game later writes the statistics, Sandboxie intercepts this operation and directs the data to the sandbox.

If you then run Freecell without the aid of Sandboxie, the read operation would bypass the sandbox altogether, and the statistics would be retrieved from the hard disk.

The transient nature of the sandbox makes it easy to get rid of everything in it. If you were to throw away the sandbox, by deleting everything in it, the sandboxed statistics would be gone for good, as if they had never been there in the first place.
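The behavior described in that quote can be sketched in a few lines of Python. This is only a toy model of the read-through, write-redirect idea, not Sandboxie's actual implementation; the `FileSandbox` class and its method names are invented for this example.

```python
import shutil
from pathlib import Path


class FileSandbox:
    """Toy overlay: reads fall through to `real_root`, writes stay in `sandbox_root`."""

    def __init__(self, real_root: Path, sandbox_root: Path):
        self.real_root = Path(real_root)
        self.sandbox_root = Path(sandbox_root)
        self.sandbox_root.mkdir(parents=True, exist_ok=True)

    def read(self, relative_path: str) -> str:
        # Prefer the sandboxed copy if the program has already written one.
        sandboxed = self.sandbox_root / relative_path
        if sandboxed.exists():
            return sandboxed.read_text(encoding="utf-8")
        return (self.real_root / relative_path).read_text(encoding="utf-8")

    def write(self, relative_path: str, data: str) -> None:
        # Writes never touch real_root; they are redirected into the sandbox.
        sandboxed = self.sandbox_root / relative_path
        sandboxed.parent.mkdir(parents=True, exist_ok=True)
        sandboxed.write_text(data, encoding="utf-8")

    def discard(self) -> None:
        # Throwing away the sandbox removes every redirected write.
        shutil.rmtree(self.sandbox_root, ignore_errors=True)
        self.sandbox_root.mkdir(parents=True, exist_ok=True)
```

In the Freecell example, the statistics file would be read from the real disk the first time, written into the sandbox folder afterwards, and would revert to the original values once `discard()` is called.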

I'll have to give that a try the next time I want to test something... but since I'm completely sure it'll work well, should I test it in a Virtual Machine? Hmm...

MORE UPDATES:

Xenocode - I got an e-mail from Kenji Obata at Xenocode, letting me know that Xenocode virtualizes applications inside of standalone EXEs. It looks like a well thought out solution, but a single developer license costs $499. This is worth a look for professional or enterprise application virtualization without buying into the whole SoftGrid style application streaming deployment thing.

In the funny timing department, my copy of Redmond Magazine just arrived today. The September issue has an overview of application virtualization, and compares Altiris Software Virtualization Solution (SVS) with LANDesk Application Virtualization.

Let me know if there are more Application Virtualization systems I've missed, or if you've used any of these please comment with your experiences.