Archives

-

Azure: Announcing New Real-time Data Streaming and Data Factory Services

The last three weeks have been busy ones for Azure. Two weeks ago we announced a partnership with Docker to enable great container-based development experiences on Linux, Windows Server and Microsoft Azure.

Last week we held our Cloud Day event and announced our new G-Series of Virtual Machines as well as our new Premium Storage offering. The G-Series VMs provide the largest VM sizes available in the public cloud today (nearly 2x more memory than the largest AWS offering, and 4x more memory than the largest Google offering). The new Premium Storage offering (which will work with both our D-series and G-series VMs) will support up to 32TB of storage per VM and more than 50,000 IOPS per VM, and will enable sub-1ms read latency. Combined, they provide an enormous amount of power that enables you to run even bigger and better solutions in the cloud.

Earlier this week, we officially opened our new Azure Australia regions – our 18th and 19th Azure regions open for business around the world. Then at TechEd Europe we announced another round of new features – including the launch of the new Azure Marketplace, a bunch of great network improvements, our new Batch computing service, general availability of our Azure Automation service and more.

Today, I’m excited to blog about even more new services we have released this week in the Azure Data space. These include:

-

Azure: New Marketplace, Network Improvements, New Batch Service, Automation Service, more

Today we released a major set of updates to Microsoft Azure. Today’s updates include:

-

Docker and Microsoft: Integrating Docker with Windows Server and Microsoft Azure

I’m excited to announce today that Microsoft is partnering with Docker, Inc to enable great container-based development experiences on Linux, Windows Server and Microsoft Azure.

Docker is an open platform that enables developers and administrators to build, ship, and run distributed applications. Consisting of Docker Engine, a lightweight runtime and packaging tool, and Docker Hub, a cloud service for sharing applications and automating workflows, Docker enables apps to be quickly assembled from components and eliminates the friction between development, QA, and production environments.

Earlier this year, Microsoft released support for Docker containers with Linux on Azure. This support integrates with the Azure VM agent extensibility model and Azure command-line tools, and makes it easy to deploy the latest and greatest Docker Engine in Azure VMs and then deploy Docker-based images within them.

Docker Support for Windows Server + Docker Hub integration with Microsoft Azure

Today, I’m excited to announce that we are working with Docker, Inc to extend our support for Docker much further. Specifically, I’m excited to announce that:

1) Microsoft and Docker are integrating the open-source Docker Engine with the next release of Windows Server. This release of Windows Server will include new container isolation technology, and support running both .NET and other application types (Node.js, Java, C++, etc) within these containers. Developers and organizations will be able to use Docker to create distributed, container-based applications for Windows Server that leverage the Docker ecosystem of users, applications and tools. It will also enable a new class of distributed applications built with Docker that use Linux and Windows Server images together.

-

Azure: Redis Cache, Disaster Recovery to Azure, Tagging Support, Elastic Scale for SQLDB, DocDB

Over the last few days we’ve released a number of great enhancements to Microsoft Azure. These include:

- Redis Cache: General Availability of Redis Cache Service

- Site Recovery: General Availability of Disaster Recovery to Azure using Azure Site Recovery

- Management: Tags support in the Azure Preview Portal

- SQL DB: Public preview of Elastic Scale for Azure SQL Database (available through .NET lib, Azure service templates)

- DocumentDB: Support for Document Explorer, Collection management and new metrics

- Notification Hub: Support for Baidu Push Notification Service

- Virtual Network: Support for static private IPs in the Azure Preview Portal

- Automation updates: Active Directory authentication, PowerShell script converter, runbook gallery, hourly scheduling support

All of these improvements are now available to use immediately (note that some features are still in preview). Below are more details about them:

Redis Cache: General Availability of Redis Cache Service

I’m excited to announce the General Availability of the Azure Redis Cache. The Azure Redis Cache service gives you a secure, dedicated Redis cache, managed as a service by Microsoft. The Azure Redis Cache is now the distributed cache solution we recommend for Azure applications.

Redis Cache

Unlike traditional caches, which deal only with key-value pairs, Redis is popular for its support of high-performance data types, on which you can perform atomic operations such as appending to a string, incrementing the value in a hash, pushing to a list, computing set intersection, union and difference, or getting the member with the highest ranking in a sorted set. Other features include support for transactions, pub/sub, Lua scripting, keys with a limited time-to-live, and configuration settings to make Redis behave more like a traditional cache.

Finally, Redis has a healthy, vibrant open source ecosystem built around it. This is reflected in the diverse set of Redis clients available across multiple languages. This allows it to be used by nearly any application, running on either Windows or Linux, that you host inside of Azure.
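To make the data type operations described above a bit more concrete, here is a minimal sketch using the StackExchange.Redis .NET client. It assumes the StackExchange.Redis NuGet package is installed and that a hypothetical `REDIS_CONNECTION` environment variable holds the connection string for your cache. The key names are purely illustrative and not part of the service.

```csharp
using System;
using StackExchange.Redis;

class RedisDataTypesSketch
{
    static void Main()
    {
        // Assumption: REDIS_CONNECTION is a hypothetical environment variable
        // that holds the connection string for your cache instance.
        string config = Environment.GetEnvironmentVariable("REDIS_CONNECTION");

        using (ConnectionMultiplexer connection = ConnectionMultiplexer.Connect(config))
        {
            IDatabase db = connection.GetDatabase();

            // Append to a string value.
            db.StringSet("greeting", "Hello");
            db.StringAppend("greeting", ", Azure");

            // Increment a field inside a hash; the new value is returned.
            long views = db.HashIncrement("page:home", "views", 1);

            // Push a value onto the head of a list.
            db.ListLeftPush("recent", "item-42");

            // Compute the intersection of two sets.
            db.SetAdd("tags:a", new RedisValue[] { "cache", "azure" });
            db.SetAdd("tags:b", new RedisValue[] { "azure", "docker" });
            RedisValue[] common = db.SetCombine(SetOperation.Intersect, "tags:a", "tags:b");

            // Read the highest-ranked member of a sorted set.
            db.SortedSetAdd("scores", "alice", 120);
            db.SortedSetAdd("scores", "bob", 95);
            RedisValue[] top = db.SortedSetRangeByRank("scores", 0, 0, Order.Descending);

            // Give a key a limited time-to-live.
            db.KeyExpire("greeting", TimeSpan.FromMinutes(5));

            Console.WriteLine("Views: " + views);
            Console.WriteLine("Common tags: " + string.Join(", ", common.ToStringArray()));
            Console.WriteLine("Top scorer: " + (string)top[0]);
        }
    }
}
```

Each call above maps to a single Redis command, which the server executes atomically. Grouping several commands into one atomic unit would need transactions or Lua scripting instead.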

Redis Cache Sizes and Editions

The Azure Redis Cache Service is offered today in the following sizes: 250 MB, 1 GB, 2.8 GB, 6 GB, 13 GB, 26 GB, and 53 GB. We plan to support even higher-memory options in the future.

Each Redis cache size option is also offered in two editions:

- Basic – A single cache node, without a formal SLA, recommended for use in dev/test or non-critical workloads.

- Standard – A replicated cache in a two-node Master/Replica configuration for high-availability, backed by an enterprise SLA.

With the Standard edition, we manage replication between the two nodes and perform an automatic failover whenever the Master node becomes unavailable (whether because of an unplanned server failure or planned patching maintenance). This helps ensure the availability of the cache and the data stored within it.

Details on Azure Redis Cache pricing can be found on the Azure Cache pricing page. Prices start as low as $17 a month.

Create a New Redis Cache and Connect to It

You can create a new instance of a Redis Cache using the Azure Preview Portal. Simply select the New->Redis Cache item to create a new instance.

You can then use a wide variety of programming languages and corresponding client packages to connect to the Redis Cache you’ve provisioned. You connect to an Azure Redis Cache with the same Redis client packages you’d use to connect to your own Redis instance. The API + libraries are exactly the same.

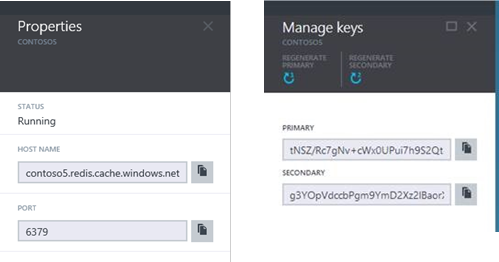

Below we’ll use a .NET Redis client called StackExchange.Redis to connect to our Azure Redis Cache instance. First open any Visual Studio project and add the StackExchange.Redis NuGet package to it using the NuGet package manager. Then obtain the cache endpoint from the Properties blade and the key from the Keys blade for your cache instance within the Azure Preview Portal.

Once you’ve retrieved these, create a connection instance to the cache with the code below:

var connection = StackExchange.Redis.ConnectionMultiplexer.Connect("contoso5.redis.cache.windows.net,ssl=true,password=...");
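The ConnectionMultiplexer is designed to be shared and reused rather than created per operation, so one common pattern is to wrap it in a lazily initialized static. The sketch below is only one way to do this. The `CacheConnection` class name and the `REDIS_CONNECTION` environment variable are hypothetical, chosen here to keep the access key out of source code.

```csharp
using System;
using StackExchange.Redis;

static class CacheConnection
{
    // Created on first use and then shared by all callers.
    // Assumption: REDIS_CONNECTION is a hypothetical environment variable
    // that holds the full connection string for your cache instance.
    private static readonly Lazy<ConnectionMultiplexer> LazyConnection =
        new Lazy<ConnectionMultiplexer>(() =>
            ConnectionMultiplexer.Connect(
                Environment.GetEnvironmentVariable("REDIS_CONNECTION")));

    public static ConnectionMultiplexer Connection
    {
        get { return LazyConnection.Value; }
    }
}
```

Application code can then call `CacheConnection.Connection.GetDatabase()` wherever it needs the cache, without opening a new connection each time.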