The Low Down Dirty Azure Blues

Remember the SETI screen savers that used to be on everyone's computer? As far as I know, it was the first bona fide use of "Cloud" computing…albeit an ad hoc cloud. I still think it was a brilliant leveraging of computing power.

My interest in clouds was re-piqued when I went to a technical seminar at the local .Net User Group. The speaker was Mike Benkovitch and he expounded magnificently on the virtues of the Azure platform. Mike always does a good job. One killer reason he gave for cloud computing is instant scalability. Not applicable for most applications, but it is there if needed.

I have a bunch of files stored on Microsoft's SkyDrive platform which is cloud storage. It is painfully slow. Accessing a file means going through layers and layers of software, redirections and security. Am I complaining? Hell no! It's free!

So my opinions of Cloud Computing are both skeptical and appreciative.

What intrigued me at the seminar, in addition to its other features, is that Azure can serve as a web hosting platform. I have a client with an Asp.Net web site I developed who is not happy with the performance of their current hosting service. I checked the cost of Azure and since the site has low bandwidth/space requirements the cost would be competitive with the existing host provider: Azure Pricing Calculator.

And, Azure has a three-month free trial. Perfect! I could try moving the website and see how it works for free.

I went through the signup process. Everything was proceeding fine until I went to the MS SQL database management screen. A popup window informed me that I needed to install Silverlight on my machine.

Silverlight? No thanks. Buh-Bye.

I half-heartedly found the Azure support button and logged a ticket telling them I didn't want Silverlight on my machine. Within 4 to 6 hours (and a myriad (5) of automated support emails) they sent me a link to a database management page that did not require Silverlight.

Thanks!

I was able to create a database immediately. One really nice feature was that after creating the database, I was given a list of connection strings. I went to the current host provider, made a backup of the database and saved it to my machine. I attached to the remote database using SQL Server Management Studio 2012 and looked for the Restore menu item. It was missing. So I tried using the SQL command:

RESTORE DATABASE MyDatabase
FROM DISK = 'C:\temp\MyBackup.bak'

Msg 40510, Level 16, State 1, Line 1
Statement 'RESTORE DATABASE' is not supported in this version of SQL Server.

Are you kidding me? Why on earth…? This can't be happening!

I opened both the source database and destination database in SQL Management Studio. I right-clicked the source database, selected "Tasks" and noticed a menu selection called "Deploy Database to SQL Azure".

Are you kidding me? Could it be? Oh yes, it be!

There was a small problem because the database already existed on the Azure machine. So I deployed to a new name, deleted the existing database and renamed the deployed database to what I needed. It was ridiculously easy.

Being able to attach SQL Management Studio to remote databases is an awesome but scary feature. You can limit the IP addresses that can access the database which enhances security but when you give people, any people, me included, that much power, one errant mouse click could bring a live system down. My Advice: Code softly and carry a large backup thumb-drive.

Then I created a web site. The URL it returned looked something like this: http://MyWebSite.azurewebsites.net/

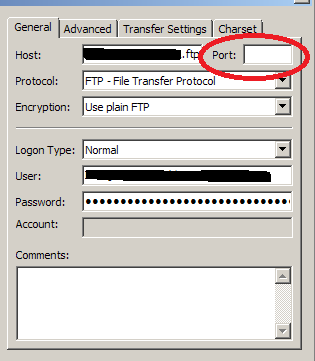

Azure supports FTP, but I couldn't figure out the settings until I downloaded the publishing profile. It was an XML file that contained the needed information.

I still couldn't connect with my FTP client (FileZilla). After about an hour of messing around, I deleted the port number from the FileZilla setup page…and voila, I was in like Flynn.
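For anyone who would rather not dig through the XML by hand, here is a minimal sketch of pulling the FTP settings out of the publishing profile. It assumes the profile has a publishProfile element with publishMethod="FTP" and the attributes publishUrl, userName and userPWD, which is what my understanding of the file format is; check your own download before trusting it. The class and method names are made up for illustration.

```csharp
using System;
using System.Linq;
using System.Xml.Linq;

public static class PublishProfileReader
{
    // Holds the three values an FTP client asks for.
    public sealed class FtpSettings
    {
        public string Host;
        public string UserName;
        public string Password;
    }

    // Returns the FTP settings found in the profile XML,
    // or null if the file contains no FTP profile.
    public static FtpSettings ReadFtpSettings(string publishSettingsXml)
    {
        XDocument doc = XDocument.Parse(publishSettingsXml);

        // Assumption: the FTP entry is marked with publishMethod="FTP".
        XElement ftp = doc.Descendants("publishProfile")
            .FirstOrDefault(p => (string)p.Attribute("publishMethod") == "FTP");
        if (ftp == null)
        {
            return null;
        }

        // Assumption: publishUrl is a full ftp:// URL; only the host part
        // goes into the FTP client's host field (leave the port blank).
        Uri url = new Uri((string)ftp.Attribute("publishUrl"));

        return new FtpSettings
        {
            Host = url.Host,
            UserName = (string)ftp.Attribute("userName"),
            Password = (string)ftp.Attribute("userPWD")
        };
    }
}
```

Calling PublishProfileReader.ReadFtpSettings(File.ReadAllText(path)) on the downloaded file should give the host, user name and password to type into FileZilla.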

There are other options of deploying directly from Visual Studio, TFS, etc. but I do not like integrated tools that do things without my asking: It's usually hard to figure out what they did and how to undo it.

I uploaded the .aspx, .cs, web.config, etc. files.

But it didn't run. The site I ported was in .NET 3.5. The Azure website configuration page gave me a choice between .NET 2.0 and 4.0. So, I switched to Visual Studio 2010, chose .NET 4.0 and upgraded the site. Of course I have the original version completely backed up and stored in a granite cave beneath the Nevada desert. And I have a backup CD under my pillow.

The site uses ReportViewer to generate PDF documents. Of course it was the wrong version. I removed the old references to version 9 and added new references to version 10 (*see note below). Since the DLLs were not on the Azure Server, I uploaded them to the bin directory, crossed my fingers, burned some incense and gave it a try. After some fiddling around it ran. I don't know if I did anything particular to make it work or it just needed time to sort things out.

However, one critical feature didn't work: ReportViewer could not programmatically generate PDF documents. I was getting this exception: "An error occurred during local report processing. Parameter is not valid."

Rats.

I did some searching and found other people were having the same problem, so I added a post saying I was having the same problem: http://social.msdn.microsoft.com/Forums/en-US/windowsazurewebsitespreview/thread/b4a6eb43-0013-435f-9d11-00ee26a8d017

Currently they are looking into this problem and I am waiting for the results. Hence I had the time to write this BLOG entry. How lucky you are.

This was the last message I got from the Microsoft person:

Hi Steve,

Windows Azure Web Sites is a multi-tenant environment. For security issue, we limited some API calls. Unfortunately, some GDI APIS required by the PDF converting function are in this list.

We have noticed this issue, and still investigation the best way to go. At this moment, there is no news to share. Sorry about this.

Will keep you posted.

If I had to guess, I would say they are concerned with people uploading images and doing intensive graphics programming which would hog CPU time. But that is just a guess.

Another problem.

While trying to resolve the ReportViewer problem, I tried to write a file to the PDF directory to see if there was a permissions problem with some test code:

String MyPath = MapPath(@"~\PDFs\Test.txt");
File.WriteAllText(MyPath, "Hello Azure");

I got this message: Access to the path <my path> is denied.
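A slightly friendlier version of that test catches the exception and reports it instead of blowing up the page. This is only a sketch; the WriteProbe class and TryWrite method are names I invented, and it assumes the failure surfaces as an UnauthorizedAccessException, which is what File.WriteAllText throws when access is denied.

```csharp
using System;
using System.IO;

public static class WriteProbe
{
    // Tries to write a small test file.
    // Returns null on success, or a short description of what went wrong.
    public static string TryWrite(string path)
    {
        try
        {
            File.WriteAllText(path, "Hello Azure");
            return null;
        }
        catch (UnauthorizedAccessException ex)
        {
            // The "Access to the path ... is denied" case.
            return "Access denied: " + ex.Message;
        }
        catch (IOException ex)
        {
            // Missing directory, locked file, and similar I/O failures.
            return "I/O error: " + ex.Message;
        }
    }
}
```

In the page, something like WriteProbe.TryWrite(MapPath(@"~\PDFs\Test.txt")) can be written to a label, so the permissions check can be rerun at any time.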

After some research, I understood that since Azure is a cloud based platform, it can't allow web applications to save files to local directories. The application could be moved or replicated as scaling occurs and trying to manage local files would be problematic to say the least.

There are other options:

- Use the Azure APIs to get a path. That way the location of the storage is separated from the application. However, the web site is then tied to Azure and can't be moved to another hosting platform.

- Use the ApplicationData folder (not recommended).

- Write to BLOB storage.

- Or, I could try and stream the PDF output directly to the email and not save a file.
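The last option can be sketched roughly like this. It assumes the PDF is already in memory as a byte array (for ReportViewer that would be the return value of LocalReport.Render, once rendering works on Azure), and it only builds the message; it does not send it. PdfMailer and BuildMessage are hypothetical names, and "Report.pdf" is just a placeholder file name.

```csharp
using System;
using System.IO;
using System.Net.Mail;

public static class PdfMailer
{
    // Builds an email with the PDF attached straight from memory,
    // so nothing is written to the web site's directories.
    public static MailMessage BuildMessage(byte[] pdfBytes, string from, string to, string subject)
    {
        MailMessage message = new MailMessage(from, to);
        message.Subject = subject;

        // The attachment reads from this stream when the message is sent,
        // so the stream has to stay open until then.
        MemoryStream stream = new MemoryStream(pdfBytes);
        message.Attachments.Add(new Attachment(stream, "Report.pdf", "application/pdf"));

        return message;
    }
}
```

The caller would hand the result to SmtpClient.Send and then dispose the message, which should also clean up the attachment and its stream.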

I'm not going to work on a final solution until the ReportViewer is fixed. I am just sharing some of the things you need to be aware of if you decide to use Azure. I got this information from here. (Note the author of the BLOG added a comment saying he has updated his entry).

Is my memory faulty?

While getting this BLOG ready, I tried to write the test file again. And it worked. Either my memory is incorrect or, much more likely, something changed on the server…perhaps while they were trying to get ReportViewer to work. (Anyway, that's my story and I'm sticking to it).

*Note: Since Visual Studio 2010 Express doesn't include a Report Editor, I downloaded and installed SQL Server Report Builder 2.0. It is a standalone Report Editor to replace the one not in Visual Studio 2010 Express.

I hope someone finds this useful.

Steve Wellens