Archives

-

Sharing session between ASP Classic and ASP.NET using ASP.NET Session state server

The difference between the session state mechanisms used by ASP Classic and ASP.NET is the most significant obstacle to ASP and ASP.NET interoperability. I started an open source project called NSession with the goal of allowing ASP Classic to access ASP.NET out-of-process session stores in the same way that ASP.NET accesses them, and thus share session state with ASP.NET.

How does ASP.NET access session state?

The underpinnings of ASP.NET session state were discussed in detail by Dino. At the beginning of a page request, ASP.NET retrieves the session state from the session store and deserializes it into an in-memory session dictionary. If the page requests a read-write session, ASP.NET locks the session exclusively until the end of the page request. It then serializes the session state back into the session store and releases the lock. If a page requests a read-only session, the session state is retrieved without an exclusive lock.
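To make these locking rules concrete, here is a minimal Python model of them. It is not how ASP.NET is implemented. `SessionStore`, `load` and `save_and_release` are hypothetical names, and `pickle` stands in for ASP.NET's serializer. The model also does not try to capture what a read-only request does when it finds the session already locked.

```python
import pickle
import threading


class SessionStore:
    """Toy session store modelling the locking rules (hypothetical API)."""

    def __init__(self):
        self._blobs = {}   # session id -> serialized session dictionary
        self._locks = {}   # session id -> exclusive lock
        self._guard = threading.Lock()

    def _lock_for(self, session_id):
        with self._guard:
            return self._locks.setdefault(session_id, threading.Lock())

    def load(self, session_id, read_only):
        """Start of request: deserialize the session dictionary.

        A read-write request first takes the exclusive lock (blocking if
        another request holds it); a read-only request takes no lock.
        """
        if not read_only:
            self._lock_for(session_id).acquire()
        blob = self._blobs.get(session_id)
        return pickle.loads(blob) if blob is not None else {}

    def save_and_release(self, session_id, items):
        """End of a read-write request: serialize back, then release the lock."""
        self._blobs[session_id] = pickle.dumps(items)
        self._lock_for(session_id).release()

    def is_locked(self, session_id):
        return self._lock_for(session_id).locked()


# Usage: one read-write request followed by a read-only one.
store = SessionStore()
items = store.load("abc", read_only=False)
items["cart"] = ["book"]
store.save_and_release("abc", items)
snapshot = store.load("abc", read_only=True)
```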

We intend to mimic the behavior of ASP.NET in ASP Classic.

How does NSession work?

You need to instantiate one of the COM objects in your ASP Classic page before it accesses session state, either:

set oSession = Server.CreateObject("NSession.Session")

or

set oSession = Server.CreateObject("NSession.ReadOnlySession")

If you instantiate NSession.Session, the session state in the session store will be transferred to the ASP Classic session dictionary, and an exclusive lock will be placed in the session store. You do not need to change your existing code that accesses the ASP Classic session object. When NSession.Session goes out of scope, it will save the ASP Classic session dictionary back to the session store and release the exclusive lock.

If you are done with the session state, you can release the lock early with

set oSession = Nothing

If you instantiate NSession.ReadOnlySession, the session state in the session store will be transferred to the ASP Classic session dictionary but no locks will be placed.
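The NSession lifecycle can be sketched in Python. Here a `with` block plays the role of the deterministic finalization that NSessionNative.dll provides. Everything in the sketch is a hypothetical stand-in: the module-level `store`, `store_lock` and `nsession_scope` are not NSession's API. Whether the write-back replaces or merges the stored items is also an assumption.

```python
import threading
from contextlib import contextmanager

# Hypothetical stand-ins for the out-of-process session store and its lock.
store = {"user": "alice"}
store_lock = threading.Lock()


@contextmanager
def nsession_scope(asp_session, read_only=False):
    """Model of NSession.Session / NSession.ReadOnlySession (not NSession's code).

    On entry the stored items are copied into the ASP Classic dictionary;
    a read-write scope also takes the exclusive lock. On normal exit a
    read-write scope writes the dictionary back (replacing the stored
    items, an assumption), and it releases the lock it took in any case.
    """
    if not read_only:
        store_lock.acquire()
    try:
        asp_session.update(store)
        yield asp_session
        if not read_only:
            store.clear()
            store.update(asp_session)
    finally:
        if not read_only:
            store_lock.release()


# Usage: existing code keeps using asp_session as before.
asp_session = {}
with nsession_scope(asp_session) as session:
    session["cart"] = 3
```

Leaving the `with` block early is the analogue of `set oSession = Nothing`.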

Installation

You may download the binaries from the Codeplex site. There are two DLLs to register. The first, NSession.dll, is a .NET Framework 4.0 DLL and is independent of the 32- or 64-bit environment. Since it is accessed by ASP Classic, it needs to be registered as a COM object using RegAsm. It also needs to be placed in the global assembly cache using gacutil. You need .NET Framework 4 on the machine. However, you can run any version of ASP.NET, because the DLL is not loaded into the same AppDomain as the ASP.NET application. I chose .NET Framework 4.0 so that I could use the C# dynamic feature to cut down on reflection code.

The second DLL, NSessionNative.dll, is a C++ DLL and is platform dependent. You need to register the appropriate version depending on whether you are running a 32-bit or 64-bit application pool. We need a C++ DLL because we need deterministic finalization to serialize the session at the end of the page request. We chose C++ over VB6 because it can create both 32-bit and 64-bit COM objects. As this is still beta software, I suggest that you use it like any pre-release software. Please report any bugs and issues on the project site forum.

Future works

At this time, I have only implemented the code to access the ASP.NET state server. The following are some of the ideas that I have in mind. Add yours to the Issue Tracker at the project site.

1. Support Sql Server session store.

2. Optimization. ASP.NET caches some configuration and objects. NSession could use the idea too.

3. A configuration setting to allow clearing the ASP Classic session dictionary at the end of the page request.

4. Implement a filter mechanism. ASP.NET may store some objects that do not make sense for ASP Classic, and vice versa. A filter mechanism can be used to filter out these objects.

-

Gave back-to-back presentations on Javascript at SoCal Code Camp today

Today, I gave two back-to-back presentations on JavaScript at SoCal Code Camp. The titles are:

The presentation material can be downloaded here.

-

I was asked to install .NET Framework 3.5 even though I already have it on my machine

Today, when I tried to install a ClickOnce application, I was asked to install .NET Framework 3.5 even though I already have it on my machine. It turns out that the web application relies on the User-Agent header to determine whether I have .NET Framework 3.5. However, IE9 no longer sends feature tokens as part of the User-Agent header. Instead, feature tokens are included in the value returned by the userAgent property of the navigator object. The workaround? Switch IE9 to IE8 compatibility mode and the website is happy.
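The Python sketch below shows the kind of server-side check that breaks here. The site's actual logic is unknown. The regular expression and both sample User-Agent strings are illustrative assumptions. The first string is in the style of IE8 with .NET feature tokens, and the second is in the style of IE9's shorter string.

```python
import re

# Feature tokens such as ".NET CLR 3.5.30729" appended by older IE versions.
CLR_TOKEN = re.compile(r"\.NET CLR (\d+)\.(\d+)")

# Illustrative User-Agent strings (assumed examples, not captured traffic).
IE8_STYLE_UA = ("Mozilla/4.0 (compatible; MSIE 8.0; Windows NT 6.1; Trident/4.0; "
                ".NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729)")
IE9_STYLE_UA = "Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; Trident/5.0)"


def clr_versions(user_agent):
    """Return the set of (major, minor) .NET CLR versions advertised in the header."""
    return {(int(major), int(minor)) for major, minor in CLR_TOKEN.findall(user_agent)}


def appears_to_have_netfx35(user_agent):
    """Naive header-based check: true only if a 3.5 token is present."""
    return (3, 5) in clr_versions(user_agent)


print(appears_to_have_netfx35(IE8_STYLE_UA))  # True
print(appears_to_have_netfx35(IE9_STYLE_UA))  # False
```

A check like this cannot tell "no .NET 3.5" apart from "browser did not send tokens", which is exactly the false prompt described above.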

-

5-minute guide to exposing .NET components to COM clients

I occasionally write COM objects in .NET, and I have to remind myself of the right things to do every time. So I finally decided to write it up so I don’t have to do the same research again.

If you register a .NET component for COM using the regasm tool, by default the tool exposes all exposable public classes. Exposable means that the class must satisfy some rules, such as having a public default constructor. For the complete list of rules, see MSDN.

The Visual Studio template for class libraries actually turns off COM visibility in the AssemblyInfo.cs file using the assembly attribute [assembly: ComVisible(false)]. This is a good practice, as we do not want to flood the registry with all the types in our library.

Next, we need to apply the ComVisible(true) attribute to all classes and interfaces that we want to expose. It is also a good practice to apply the attribute [ClassInterface(ClassInterfaceType.None)] to classes and implement interfaces explicitly. Why? By default, .NET automatically creates a class interface containing all exposable methods, and we do not have much control over that interface. So it is a good idea to turn off the class interface and control our interfaces explicitly.

Lastly, it is a good idea to explicitly apply Guid attributes to classes and interfaces. This helps avoid adding lots of orphan ClassId entries to the registry on our development machine. Visual Studio has a Create GUID tool right under the Tools menu.
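Putting the three practices together, the following Python helper emits the C# attribute layout for a COM-visible interface and class. The GUIDs come from `uuid.uuid4()`, much as Visual Studio's Create GUID tool would produce them. The helper names, the `IGreeter`/`Greeter` types and the `Greet` method are hypothetical. The attributes themselves come from System.Runtime.InteropServices. Run the helper once and paste the result, because regenerating it produces new GUIDs each time, which defeats the purpose.

```python
import uuid


def guid_attribute():
    """Return a C# [Guid("...")] attribute line with a new random GUID."""
    return '[Guid("{}")]'.format(str(uuid.uuid4()).upper())


def com_visible_skeleton(class_name, interface_name):
    """Return C# source text for a COM-visible interface/class pair.

    The C# file also needs 'using System.Runtime.InteropServices;'.
    """
    return "\n".join([
        "[ComVisible(true)]",
        guid_attribute(),
        f"public interface {interface_name}",
        "{",
        "    string Greet(string name);",
        "}",
        "",
        "[ComVisible(true)]",
        guid_attribute(),
        "[ClassInterface(ClassInterfaceType.None)]",
        f"public class {class_name} : {interface_name}",
        "{",
        '    public string Greet(string name) { return "Hello, " + name; }',
        "}",
    ])


print(com_visible_skeleton("Greeter", "IGreeter"))
```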

This is just a brief discussion and is no substitute for MSDN. Adam Nathan wrote the definitive book on the subject, .NET and COM. This book was out of print for a while, so even a used copy was very expensive. Fortunately, the publisher reprinted it. Adam also has some nice articles on InformIT (you need to click the Articles tab).

-

Take pain out of Windows Communication Foundation (WCF) configuration (2)

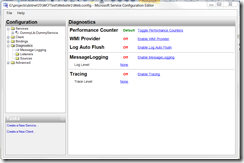

This post continues the previous part. I am going to discuss how to troubleshoot WCF problems using WCF tracing and message logging. Again, I am going to use the Microsoft Service Configuration Editor discussed in the previous part. When you click the Diagnostics node on the left, you will see the following:

You will want to enable tracing and message logging. If you want to see the log entries immediately, you also want to enable log auto flush. What is the difference between tracing and message logging? Tracing captures the life cycle of a WCF service call, while message logging captures the messages themselves. If you get a message deserialization error, you need message logging to inspect the message. Once logging is enabled, you need to go to each listener to configure the log location and to each source to configure the trace level. If you want to log the entire SOAP message, go to the Message Logging node and set LogEntireMessage to true. MSDN has a nice article on recommended settings for development and production environments.

Also, do not change the .svclog file extension when you specify the log file. This is the registered extension for WCF trace log files. If you double-click a log file, SvcTraceViewer opens and displays the contents of the log. SvcTraceViewer is another tool in the same directory as the configuration editor.

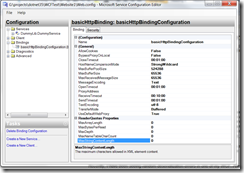

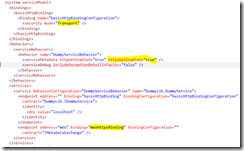

Recently, I have been seeing random deserialization errors in one of my WCF services. After turning on full message logging, I was able to inspect the full SOAP message, and I saw nothing wrong with it. So what is the problem? By default, to prevent denial-of-service attacks, WCF imposes limits on message length, array length and string length. These can be configured in the binding configuration:

The default limit on string content length is 8192 characters. Some clients were sending strings longer than that, so I had to increase the MaxStringContentLength attribute under the ReaderQuotas property. For the defaults of the other quotas, see this page on MSDN.
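A simplified Python model of the string quota shows why a well-formed message can still fail to deserialize. It is only a model. WCF enforces quotas inside its XML reader, whereas this sketch counts the characters of element text after parsing. `check_string_quota` and `QuotaExceeded` are hypothetical names.

```python
import xml.etree.ElementTree as ET

# WCF's default for the MaxStringContentLength reader quota.
DEFAULT_MAX_STRING_CONTENT_LENGTH = 8192


class QuotaExceeded(Exception):
    """Raised when an element's text is longer than the quota allows."""


def check_string_quota(xml_text, max_len=DEFAULT_MAX_STRING_CONTENT_LENGTH):
    """Parse a message and reject it if any element text exceeds max_len characters."""
    root = ET.fromstring(xml_text)
    for elem in root.iter():
        if elem.text is not None and len(elem.text) > max_len:
            raise QuotaExceeded(
                f"<{elem.tag}> holds {len(elem.text)} characters; the quota is {max_len}")
    return root


# A well-formed message whose only problem is one long string.
message = "<Envelope><Body><Comment>" + "x" * 10000 + "</Comment></Body></Envelope>"
try:
    check_string_quota(message)
except QuotaExceeded as err:
    print("rejected:", err)
check_string_quota(message, max_len=65536)  # raising the quota lets it through
```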

WCF is big, and I cannot discuss all the various configuration options. There is no substitute for MSDN or a good book. I hope I have covered some common scenarios to help you get through these problems quickly without spending lots of time navigating the MSDN documentation.

-

Take pain out of Windows Communication Foundation (WCF) configuration (1)

WCF is a very flexible technology, and the flexibility comes with complexity: there are many configurable pieces. It is usually relatively easy to start with an ASP.NET project and add a WCF service to it. However, as we start changing things and deploying web services to production, many unexpected problems can occur. I have written many WCF web services, but I don’t spend a high enough percentage of my time working with WCF. So I go through the same struggle each time I work with it, and I thought I would start documenting the experience to stop the pain.

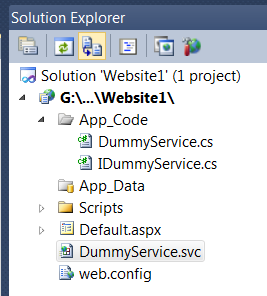

If I start with an empty website called website1 and add a WCF service called DummyService, Visual Studio adds DummyService.svc as well as the associated interface and implementation in the App_Code directory.

The contents of the .svc file look like:

Everything works fine and makes sense, but both the service interface and the implementation class live in the global namespace. Now suppose I would like to put the business logic in a library project. I add another library project with the namespace DummyLib and move the interface and implementation into it. I add a reference to System.ServiceModel in my DummyLib project and a project reference to DummyLib in my website. Now I need to change my .svc file. Obviously, I no longer need the CodeBehind attribute, because my code is compiled by the library project. How do I reference the class? According to MSDN, the Service attribute should point to "Service, ServiceNamespace". Unfortunately, that does not work. Fortunately, as in any other place where a type is referenced, I can use a type name like “TypeName, AssemblyName, etc”. Look for a type attribute in the web.config file and you will see what I am talking about. So now my .svc file looks like:
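A small Python sketch illustrates the type reference format. It splits an assembly-qualified name into its parts and builds a ServiceHost directive without CodeBehind. The function names are hypothetical. The `Language="C#"` attribute is assumed to match the file Visual Studio generates. The parser deliberately ignores generic type names, whose brackets can contain commas.

```python
def split_type_reference(type_ref):
    """Split "Namespace.Type, Assembly[, Key=Value, ...]" into its parts.

    Generic type names (whose brackets may contain commas) are not handled.
    """
    parts = [p.strip() for p in type_ref.split(",")]
    if len(parts) < 2 or not parts[0] or not parts[1]:
        raise ValueError(f"expected 'TypeName, AssemblyName', got {type_ref!r}")
    extras = dict(p.split("=", 1) for p in parts[2:])
    return parts[0], parts[1], extras


def service_host_directive(type_name, assembly):
    """Build an .svc directive for a service compiled into a separate library."""
    return f'<%@ ServiceHost Language="C#" Service="{type_name}, {assembly}" %>'


print(split_type_reference("DummyLib.DummyService, DummyLib, Version=1.0.0.0, Culture=neutral"))
print(service_host_directive("DummyLib.DummyService", "DummyLib"))
```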

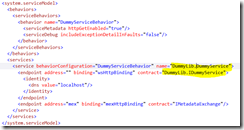

Is it enough? No. WCF stores very little information in the class and a lot of information in the web.config; that is what makes it configurable. So we need to modify the web.config to reference both the namespaced interface and the namespaced class (two changes).

Now it is time to examine our web.config file. There is a service element that points to our implementation type. The service exposes two endpoints. One is our service endpoint; the other, the “mex” endpoint, is for retrieving metadata (in our case, WSDL). The service endpoint uses wsHttpBinding and the mex endpoint uses mexHttpBinding. Finally, the service points to a behavior that has HttpGetEnabled set and does not include exception details in faults. Confused? It is not easy to create a configuration like this from scratch, but once Visual Studio has created it for us, we can feel our way around a bit. Fortunately, the Windows SDK has a really nice tool called SvcConfigEditor. It is normally in C:\Program Files\Microsoft SDKs\Windows\v7.0A\Bin or C:\Program Files (x86)\Microsoft SDKs\Windows\v7.0A\Bin. If I open my web.config file with SvcConfigEditor and look at the choices for the endpoint binding:

There are lots of choices. If we work with SOAP, two common choices are basicHttpBinding and wsHttpBinding. basicHttpBinding supports an older SOAP standard. It does not support message-level security, but it is compatible with all kinds of clients. wsHttpBinding supports a newer SOAP standard and many of the WS-* extensions. Now suppose we have legacy clients, so we need to use basicHttpBinding. We also want to use HTTPS to secure the service at the transport level. Let us see how many changes we need to make.

First, basicHttpBinding does not support HTTPS by default, so we need to create a binding configuration to change the default. I click the Bindings node on the left and add a binding configuration called basicHttpBindingConfiguration. Then I click the Security tab and change the Mode from “None” to “Transport”. Now I can return to the endpoint, where the configuration I just created is available to select in the BindingConfiguration attribute. Next, we need to go to the behavior and add HttpsGetEnabled=“True”. Lastly, we need to go to the “mex” endpoint and change its binding from mexHttpBinding to mexHttpsBinding. So to change from HTTP to HTTPS, we need to make three modifications, and our web.config now looks like:
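For reference, the three HTTPS modifications can be expressed as a Python script. The script builds the corresponding `<system.serviceModel>` elements with `xml.etree.ElementTree`. The element and attribute names follow WCF's configuration schema as I understand it. The behavior name `ServiceBehavior`, the function name and the `DummyLib` type names are placeholders. Compare the output with what SvcConfigEditor writes before relying on it.

```python
import xml.etree.ElementTree as ET


def https_service_model(service_type, contract_type,
                        binding_config="basicHttpBindingConfiguration",
                        behavior_name="ServiceBehavior"):
    """Build a <system.serviceModel> element reflecting the three HTTPS changes."""
    sm = ET.Element("system.serviceModel")

    # Change 1: a basicHttpBinding configuration with Transport security.
    basic = ET.SubElement(ET.SubElement(sm, "bindings"), "basicHttpBinding")
    binding = ET.SubElement(basic, "binding", name=binding_config)
    ET.SubElement(binding, "security", mode="Transport")

    # Change 2: publish metadata over HTTPS; keep exception details out of faults.
    behaviors = ET.SubElement(ET.SubElement(sm, "behaviors"), "serviceBehaviors")
    behavior = ET.SubElement(behaviors, "behavior", name=behavior_name)
    ET.SubElement(behavior, "serviceMetadata", httpsGetEnabled="true")
    ET.SubElement(behavior, "serviceDebug", includeExceptionDetailInFaults="false")

    # Change 1 (continued): the service endpoint selects the binding configuration.
    services = ET.SubElement(sm, "services")
    service = ET.SubElement(services, "service", name=service_type,
                            behaviorConfiguration=behavior_name)
    ET.SubElement(service, "endpoint", address="", binding="basicHttpBinding",
                  bindingConfiguration=binding_config, contract=contract_type)
    # Change 3: the "mex" endpoint moves to mexHttpsBinding.
    ET.SubElement(service, "endpoint", address="mex", binding="mexHttpsBinding",
                  contract="IMetadataExchange")
    return sm


config = https_service_model("DummyLib.DummyService", "DummyLib.IDummyService")
print(ET.tostring(config, encoding="unicode"))
```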

In the next part, I will talk about WCF tracing and message logging, which make troubleshooting much easier.

-

Sharing session state over multiple ASP.NET applications with ASP.NET state server

There are many posts about sharing session state using Sql Server, but there is little information about out-of-process session state. The primary difficulty is that there is no official way to do it. The source code for the Sql Server session state provider is available in the ASP.NET provider toolkit, but information on the state server is scarce. The method introduced in this post is based on public information as well as a little help from Reflector. I should warn that this method is a hack, because it uses reflection to change a private member. Therefore, it will not work in a partially trusted environment. Future versions of the OutOfProcSessionStateStore class could also break compatibility. If that happens, users should apply the principles discussed in this post to find a solution.

I will first discuss how the ASP.NET state server used by out-of-proc session state works. According to the published protocol specification, the ASP.NET state server is essentially a mini HTTP server speaking a customized HTTP. The key that ASP.NET uses to look up data is composed of an application id from HttpRuntime.AppDomainAppId, a hash of it, and the SessionId stored in the cookie. This is very similar to the Sql Server session state. Developers have been using reflection to modify the _appDomainAppId private variable before it is used by SqlSessionStateStore. However, this method does not work with OutOfProcSessionStateStore because of the way it was written.

When the ASP.NET session state http module is loaded, it uses the mode attribute of the sessionState element in the Web.Config file to determine which provider to load. If the mode is “StateServer”, the http module loads OutOfProcSessionStateStore and initializes it. The partial key it constructs is stored in the s_uribase private static variable. Since SessionStateModule loads very early in the pipeline, it is difficult to change the _appDomainAppId variable before OutOfProcSessionStateStore is loaded.

The solution is to modify the data in OutOfProcSessionStateStore using reflection. According to the ASP.NET life cycle, an ASP.NET application can actually load multiple HttpApplication instances. For each HttpApplication instance, ASP.NET loads the entire set of http modules configured in machine.config and web.config, in no specific order. After loading the http modules, ASP.NET calls the Init method of the HttpApplication class, which can be overridden in global.asax. Therefore, we can access the loaded OutOfProcSessionStateStore in the Init method and modify it so that all the ASP.NET applications use the same application id to access the state server. ASP.NET is already good at keeping the session id the same across applications. If this is not enough, we can supply a SessionIdManager.

So, we just need to place the following code in global.asax or code-behind:

As you can see, this code works purely because we hacked it according to the way OutOfProcSessionStateStore was written. Future changes to the OutOfProcSessionStateStore class may break our code, or may make our life harder or easier. I hope future versions of ASP.NET will make it easier for us to control the application id used against the state server. In fact, Windows Azure already has a session state provider that allows sharing session state across applications.

Edited on 10/17/2011:

The previous version of the code only worked in IIS6 or IIS7 classic mode. I made a change so that the code works in IIS7 integrated mode as well. In IIS7 integrated mode, the module's Init() has not yet been called when Application.Init() is called. As a result, the _store variable is not yet initialized. Instead, we can change the _appDomainAppId of HttpRuntime at this point, because it will be picked up later when the session store is initialized. The session store uses a key in the form of AppId(Hash) to access the session. The Hash is generated from the AppId and the machine key. To ensure that all applications use the same key, it is important to explicitly specify the machine key in machine.config or web.config rather than using an auto-generated key. See http://msdn.microsoft.com/en-us/library/ff649308.aspx for details, especially the section "Sharing Authentication Tickets Across Applications".
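A small Python model shows how the key layout affects sharing. It is hypothetical throughout. HMAC-SHA256 stands in for whatever hash ASP.NET actually computes from the AppId and machine key, and the `/` separator before the session id is an assumption. The application ids only mimic the /LM/W3SVC/... style of HttpRuntime.AppDomainAppId. What the model does show is that two applications meet on the same key only when both the AppId and the machine key match.

```python
import hashlib
import hmac


def state_server_key(app_id, session_id, machine_key):
    """Hypothetical model of an AppId(Hash) key followed by the session id.

    HMAC-SHA256 is a stand-in for ASP.NET's real hash, and the '/'
    separator is an assumption; only the dependencies are modelled.
    """
    digest = hmac.new(machine_key, app_id.encode("utf-8"), hashlib.sha256).hexdigest()[:8]
    return f"{app_id}({digest})/{session_id}"


session_id = "abc123"
key = b"explicit-shared-machine-key"

# Default: each application has its own AppId, so the keys differ.
app1 = state_server_key("/LM/W3SVC/1/ROOT/app1", session_id, key)
app2 = state_server_key("/LM/W3SVC/1/ROOT/app2", session_id, key)

# After forcing a shared AppId, the keys match only if the machine keys match.
shared = "/LM/W3SVC/1/ROOT/shared"
same_key = state_server_key(shared, session_id, key) == state_server_key(shared, session_id, key)
auto_keys = state_server_key(shared, session_id, b"auto-1") == state_server_key(shared, session_id, b"auto-2")
print(app1 != app2, same_key, auto_keys)
```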

-

Theory and Practice of Database and Data Analysis (4) – Capturing additional information on relations

So far in this series, I have been talking about traversing related data by navigating down the referential constraints. The physical database makes no distinction about the nature of a foreign key, whether it is a parent-child relationship or another type of reference. In logical ER modeling, we often capture more information. So in this post, I will explore the idea of capturing additional information so that we can build more powerful tools.

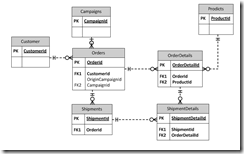

Let me continue with the fictitious e-commerce company example introduced in part 1 of this series. We have an Orders table to capture the order header and an OrderDetails table to capture the line items. An order must be placed by a customer, and may or may not originate from a marketing campaign. An order may be fulfilled by one or more shipments. Each shipment ships order line items in part or in full. So we have an ER diagram like the following:

In the ER diagram, there is a clear parent-child relationship between Orders and OrderDetails and between Shipments and ShipmentDetails. If we send the data in an XML file, each OrderDetail element would be a child of an Order element, and each ShipmentDetail element would be a child of a Shipment element. However, ShipmentDetails appears to have two parents: Shipments and OrderDetails. We pick the parent by the importance of the relationship, so we choose Shipments. We then need an additional mechanism to store the cross references between ShipmentDetails and OrderDetails in our XML file.

Let us examine the other relations. Customer is a mandatory attribute of the Orders table, and Product is a mandatory attribute of OrderDetails. Campaign is an optional attribute of Orders. We can further classify Products as a system table and Customers as a data table.

So we have classified the relations in our simple example into several categories: parent-child, cross-reference, mandatory attribute to system table, mandatory attribute to data table and optional attribute.
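One way to record these categories is a small annotated relation list, sketched in Python below. The `RelationKind` and `Relation` types, the Campaigns table name and the helper functions are hypothetical. The Shipments-to-Orders relation is left out because the post does not classify it.

```python
from dataclasses import dataclass
from enum import Enum


class RelationKind(Enum):
    PARENT_CHILD = "parent-child"
    CROSS_REFERENCE = "cross-reference"
    MANDATORY_SYSTEM = "mandatory attribute to system table"
    MANDATORY_DATA = "mandatory attribute to data table"
    OPTIONAL = "optional attribute"


@dataclass(frozen=True)
class Relation:
    referencing: str  # table that holds the foreign key
    referenced: str   # table the foreign key points to
    kind: RelationKind


# Human-supplied annotations for the example model.
RELATIONS = [
    Relation("OrderDetails", "Orders", RelationKind.PARENT_CHILD),
    Relation("ShipmentDetails", "Shipments", RelationKind.PARENT_CHILD),
    Relation("ShipmentDetails", "OrderDetails", RelationKind.CROSS_REFERENCE),
    Relation("Orders", "Customers", RelationKind.MANDATORY_DATA),
    Relation("OrderDetails", "Products", RelationKind.MANDATORY_SYSTEM),
    Relation("Orders", "Campaigns", RelationKind.OPTIONAL),
]


def child_tables(table):
    """Tables nested under `table` when exporting to XML."""
    return sorted(r.referencing for r in RELATIONS
                  if r.referenced == table and r.kind is RelationKind.PARENT_CHILD)


def needs_existence_check(table):
    """Data tables that `table` must reference; their rows must travel with the export."""
    return sorted(r.referenced for r in RELATIONS
                  if r.referencing == table and r.kind is RelationKind.MANDATORY_DATA)


def cross_references(table):
    """References that must be re-linked after import."""
    return sorted(r.referenced for r in RELATIONS
                  if r.referencing == table and r.kind is RelationKind.CROSS_REFERENCE)


print(child_tables("Orders"), needs_existence_check("Orders"), cross_references("ShipmentDetails"))
```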

Now let us use an example to see how such a classification can help us. Suppose we are building a tool to export all data related to a transaction from our production database into an XML file and then import it into our development database. The ID of an element could change from one system to another. That is not a problem for parent-child relations. We get a new key when we insert the parent and can propagate it down to the children. However, we need to take extra care to ensure that the cross references are maintained.

We do not have to worry about the system table, but we do have to worry about the data table, because our development database may or may not have the same customer. So from our classification, we can generically determine that we need to export a copy of each mandatory reference to a data table, along with its children, so that we can check for its existence in the destination database.
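The import side can be sketched in Python. The approach is to insert parents first, remember each old-to-new ID mapping, and translate child foreign keys and the cross-reference through that map. This is a simplified in-memory model, not a real database tool. The `Table` class, the column names (`customer_id`, `order_detail_id` and so on) and matching customers by `email` are all hypothetical. Product IDs are copied unchanged, on the assumption that system tables are identical in both databases.

```python
import itertools


class Table:
    """Minimal in-memory stand-in for a destination table with an identity key."""

    def __init__(self, first_id):
        self.rows = {}
        self._next_id = itertools.count(first_id)

    def insert(self, values):
        new_id = next(self._next_id)
        self.rows[new_id] = dict(values)
        return new_id


def import_transaction(export, db):
    """Insert exported rows parents-first, translating old IDs to new ones."""
    new_id = {}  # (table, old id) -> id assigned in the destination

    def put(table, row, **foreign_keys):
        values = {k: v for k, v in row.items() if k != "id"}
        for column, parent_table in foreign_keys.items():
            values[column] = new_id[(parent_table, row[column])]
        new_id[(table, row["id"])] = db[table].insert(values)

    # Mandatory reference to a data table: reuse a matching customer if present.
    for c in export["Customers"]:
        match = next((i for i, r in db["Customers"].rows.items()
                      if r["email"] == c["email"]), None)
        if match is None:
            match = db["Customers"].insert({k: v for k, v in c.items() if k != "id"})
        new_id[("Customers", c["id"])] = match

    for row in export["Orders"]:
        put("Orders", row, customer_id="Customers")
    for row in export["OrderDetails"]:
        put("OrderDetails", row, order_id="Orders")  # product_id copied as-is
    for row in export["Shipments"]:
        put("Shipments", row, order_id="Orders")
    for row in export["ShipmentDetails"]:
        # Parent (Shipments) and cross-reference (OrderDetails) are both remapped.
        put("ShipmentDetails", row, shipment_id="Shipments", order_detail_id="OrderDetails")
    return new_id


db = {name: Table(first) for name, first in [
    ("Customers", 500), ("Orders", 100), ("OrderDetails", 200),
    ("Shipments", 300), ("ShipmentDetails", 400)]}
db["Customers"].insert({"email": "pat@example.com"})  # already in the destination
export = {
    "Customers": [{"id": 7, "email": "pat@example.com"}],
    "Orders": [{"id": 1, "customer_id": 7}],
    "OrderDetails": [{"id": 10, "order_id": 1, "product_id": 42},
                     {"id": 11, "order_id": 1, "product_id": 43}],
    "Shipments": [{"id": 20, "order_id": 1}],
    "ShipmentDetails": [{"id": 30, "shipment_id": 20, "order_detail_id": 11}],
}
ids = import_transaction(export, db)
print(db["ShipmentDetails"].rows)  # {400: {'shipment_id': 300, 'order_detail_id': 201}}
```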

Besides categorization, other information we would like to capture includes one-to-one relationships, some of which can be inferred: if a referential constraint references the primary key in both tables, the relationship is one-to-one. We would also like to capture hidden relationships. These are real references that cannot be enforced by a foreign key constraint in Sql Server. For example, some tables contain an ID column that can store multiple types of IDs, with a separate column indicating the type of ID stored in the ID column.
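Both kinds of extra information can be captured with very little code. The Python sketch below is illustrative only. `is_one_to_one` compares column sets, which ignores column order in composite keys and ignores unique constraints. The `EntityType`/`EntityId` columns, the type codes and the OrderExtras and Notes tables are hypothetical examples.

```python
def is_one_to_one(fk_columns, referenced_columns, referencing_pk, referenced_pk):
    """Infer one-to-one: the foreign key is the referencing table's whole
    primary key and points at the referenced table's whole primary key."""
    return set(fk_columns) == set(referencing_pk) and set(referenced_columns) == set(referenced_pk)


def resolve_hidden_reference(row, id_column, type_column, targets):
    """Resolve a polymorphic ID column: `targets` maps type codes to tables."""
    return targets[row[type_column]], row[id_column]


# Hypothetical OrderExtras table keyed by OrderId, referencing Orders.OrderId.
print(is_one_to_one(["OrderId"], ["OrderId"], ["OrderId"], ["OrderId"]))        # True
# OrderDetails.OrderId references Orders.OrderId, but OrderDetails' key is OrderDetailId.
print(is_one_to_one(["OrderId"], ["OrderId"], ["OrderDetailId"], ["OrderId"]))  # False

# Hypothetical Notes row whose EntityId holds either a customer or an order ID.
note = {"EntityType": "O", "EntityId": 5}
print(resolve_hidden_reference(note, "EntityId", "EntityType", {"C": "Customers", "O": "Orders"}))  # ('Orders', 5)
```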

With human input of extra information beyond what we can extract from the physical database, we should be able to build better tools. I will explore some tools in upcoming posts in this series.