End time: 6 hours

I'm ditching my PDA (currently a BlackBerry Curve) and my laptop for note taking and going retro.

For the past few years I've been taking notes in various digital media. Back when I had my Palm, I would do handwritten notes (sometimes trying to fight that silly PalmScript recognizer). When I had a tablet available, I would do the same in OneNote and recently I just fill up OneNote pages with notes and scribbles (all typed in). The biggest issue I have with digital note taking is, while it's fast on data entry, it's horrible in capturing intent and nuances of information.

Recently I got inspired by a concept Mike Rhodes seems to have earmarked back in 2007 called "sketchnotes". It's the idea of capturing hand-written notes in a book, much like how you would scribble notes in class if you were that sort of person, adding in images and enhancing the notes with fonts and flair. I remember those days as I would use my sketch book for pretty much all notes rather than a traditional lined book (I went to a Vocational Art School so 70% of my classes were art based and thus I had dozens of sketchbooks for use throughout the year).

Sketchnotes are just like those days of taking notes in art class, except now I use them in corporate meetings, conferences, and impromptu get togethers at user group sessions, code camps, etc. They're much easier to lug around than a laptop (although I usually always have my laptop handy) but the best thing is they're easier to get started (it's like having a solid-state drive) and require no batteries!

The best part of sketchnotes is that you tend to flow free with the information and really focus on what's being said as you translate it into something more meaningful than just words on a page. Changing fonts is much faster than on the computer (and I can invent fonts on the fly) along with bits of flair (borders, highlighting, shadows, etc.) that punch out a concept. It's a slower process than capturing brain dumps onto OneNote and typing in information, but it lets me be more creative (something I've been lacking in the last few decades) and helps me understand the concepts that were being presented or talked about. It's also like having a whiteboard hovering around with you all the time as I can quickly do a screen mockup as the customer might be describing his/her needs which I can then just turn around and show to them "Do you mean something like this?". Fast feedback cycle, I like that.

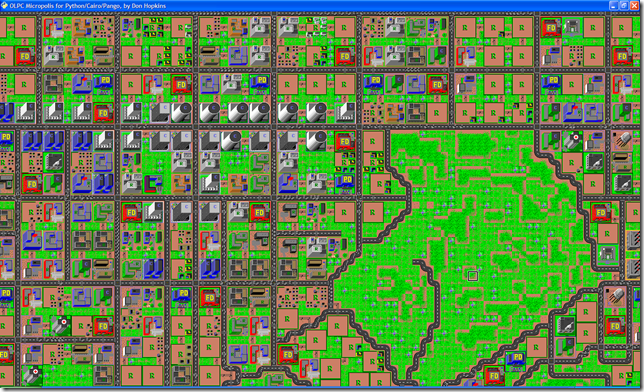

Here's an image from my current sketchnotebook (taken from the ALT.NET Open Spaces Conference in Calgary a few months ago):

The image is from my CrackBerry so not very clear. I'll be creating a new Flickr pool and uploading higher quality images as I get them scanned.

My weapons of choice are the unlined large size (5"x9") Moleskin plain soft notebook and a black Uni-Ball Vision Micro Roller Pen. The Uni-Ball bleeds ever so slightly to get that "homemade" effect (something that's missed in digital) and lets me be free with the note taking, not worring about erasing (since you can't do it anyway). Moleskine rocks and while they're more pricier than other books, they hold up under stress (and come with a cardboard holder in case I have some handout to stash).

Yes kids, you can do something like this with OneNote and a tablet PC but a) I don't always have a tablet PC b) I find my OneNote notes turn into a graveyard of information that's always being sorted and never being used and c) I still need batteries to run OneNote (not to mention an OS that takes a few minutes to boot up). I'm not saying abandon your tablets and Moleskine is king (not that anyone would listen to me anywho). I use this as an alternative and like it, but YMMV.

Anyways while it might not be a new concept for you, it's something I've been trying to get into lately and now I'm comfortable doing on a regular basis. It took a few false starts and weeks of practice to get back to scribing (and my hand still cramps up from time to time as I have to switch back and forth between paper and keyboard). Sometimes the notes are pure chicken scratch and I have a hard time deciphering them but it's the imagery and little things that make it work for me. They say a picture is worth a thousand words and I believe it. I feel like a giddy school girl again, scribbling images madly that remind me of School House Rock (and we *all* know how cool SHR is).

So goodbye digital input, it's been nice. Hand writing is back for me and it's here to stay. Maybe give it a try yourself?

I'll come out of the closet for a moment as I become a little more jaded in life and bitter this holiday season. I'm an Anti-Architect. I'm all for software architecture as the alternative is let some guy who read "Teach Yourself SharePoint Programming in 24 Hours" unleash onto an Enterprise solution and then have some high priced consultant come in and clean up the mess (or the guy that created the mess *was* a high priced consultant and now you need an even higher one to fix the problem) but while I'm an Architmatech in some sense of the word, I'm also a developer at heart and a creator in essence. I'm a little bit Country, I'm a little bit Rock and Roll.

I was looking over some of the dated material on Microsoft's Architecture Certification Program and found their role definitions here. One of the issues with the IT world is that MSFT publishes some white paper, document, or scans a napkin and IT managers flock to it like flies on dung, spouting as the Gospel and Word and declaring that everyone follow it blindly. If Microsoft wrote it, it must be right. Right? Maybe. Some stuff they get right, others they're way off base.

Here are my top 10 reasons into what makes an Anti-Architect (for lack of a better term)

These are some ideas around being what I call an Anti-Architect. Use them as you see fit, YMMV.

Microsoft has put together another version (this is rev 4) of their WPF Application Quality Guide, a fairly complete set of tasks and ideas around writing good quality apps using the Windows Presentation Framework.

http://windowsclient.net/wpf/white-papers/wpf-app-quality-guide.aspx

A few things to note:

Overall it's a good place to start when looking for resources on writing WPF apps and has some great links on real "Best Practices" for designers and developers. Check it out.

(thanks to Greg and The Oren Machine for comments that I've incorporated into this post)

Where do I begin? There's so much stuff in my head I'm pretty much going to explode and leave little gray bits of matter all over my cubicle this morning.

Free Range

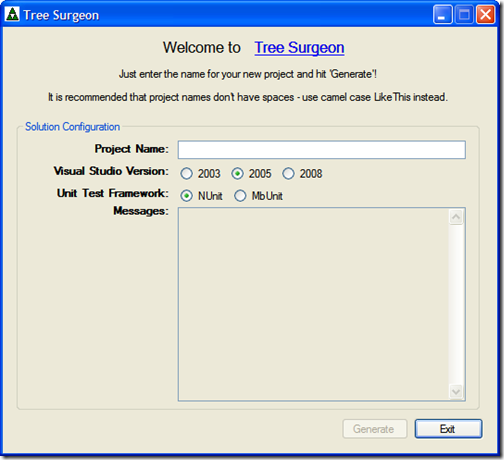

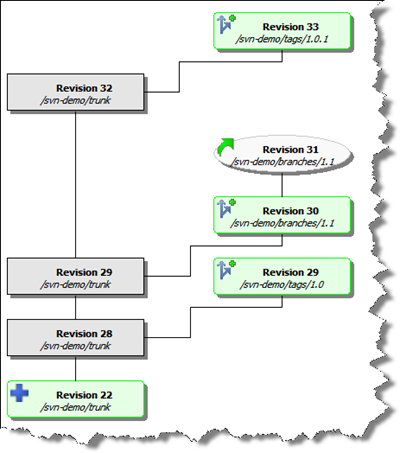

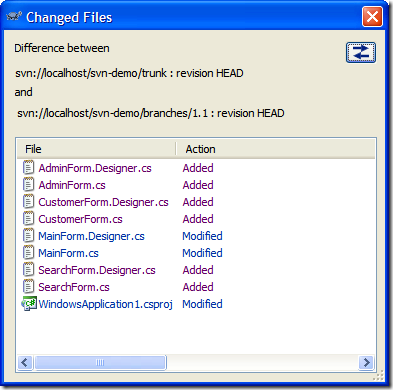

First off, I'm now a Free Range Chicken. As the economy slumps and oil hits a new low, living in an Oil and Gas city has it's merits but it also has it's drawbacks. With a whack of new projects being cut from Petro Canada's budget my major client right now has to let me go. They've given me by the end of the year to finish up work which is super cool of them, rather than being led out of the office last week. Of course the next couple of weeks I'm doing a massive brain dump to the internal developer wiki to make sure everyone is up to speed on where things are and how we've been doing things. It's been an awesome road here as I started a couple of years ago with them doing taxonomy and SharePoint setup but then the last couple of years it's been primarily focused on Agile (mostly Scrum with some Kanban lately) mentoring and setup. The teams are focused now with everyone writing unit tests and practicing TDD; projects being generated initially from TreeSurgeon; Subversion over TFS for a source control system; and a whack of other new stuff that I'm proud to have been a key initiator of. Looking back it's almost draconian in how things were being done (no tests, VSS; old-style code-behind web sites with [ack] SQL statements in code, etc.).

While I am a Scrum/Agile practitioner coach and mentor and all-around SharePoint guy I considered a few other ideas for a new career:

In any case, I'm out and about and hungry like a wolf. Ring me if you're interested.

TechDays

TechDays kicks off this week in Calgary at the Round-up Centre. I'll be doing two sessions on WPF with the details below. Hope to see you there!

Building Differentiated UI Applications Using WPF

The Patterns & Practices Smart Client Software Factory includes a set of guidance for developing composite smart client applications in WinForms. With the release of WPF, there is a need for bringing the Composite Client scenario to the framework. This includes supporting a new Differentiated UX experience. The "Acropolis" incubation project, which has now been folded into an upcoming platform release, is the future, but what is the answer for customers adopting WPF today? Come to this session and find out about new Composite WPF (CWPF) client guidance that Patterns & Practices are developing jointly with the UIFX team. We get in the driver’s seat and take CWPF out on the road to see what she's made of. We dive into demos, check out the new APIs, and talk about the features.

The Best of Both Worlds: WPF in WinForms and Vice Versa

While Windows Presentation Foundation (WPF) is a compelling new framework for building rich UI applications, a wholesale adoption of WPF is not always the best solution for current application development. You may have an existing investment in Windows Forms controls that you don't want to give up or a complex application that you just want to add some new visual features to. This session shows you how to leverage existing investments in capabilities in Windows Forms while taking maximum advantage of what WPF has to offer. Learn how to embed WPF controls in Windows Forms apps, Windows Forms controls in WPF apps, and see how the designer supports you for developing these hybrids. Also, learn how to best architect your UI applications to take advantage of these capabilities.

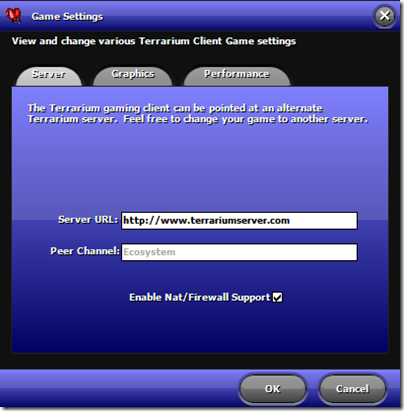

Terrarium

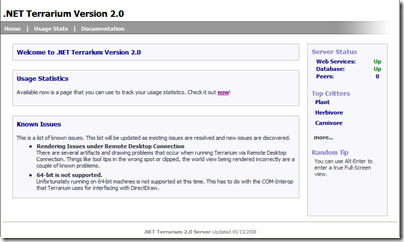

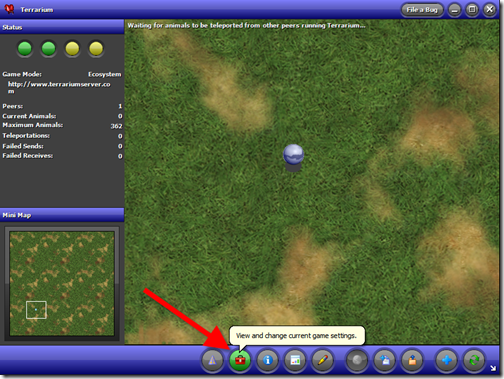

Terrarium is kicking up a few dozen notches. I had a great discussion with Eric Zinda last week on the phone about the origins of Terrarium and challenges the team had. Remember, this stuff was created back in the 1.x days (well, prior to the public release) so web services were new, code access security was virtually unknown (even to the Terrarium team) and here these guys were flinging .NET assemblies across the wire to run on a remote system. Overall, as I've said in presentations on Terrarium, it's a kick-butt demo app like no other. Web Services; Code Access Security; DirectX Client and WinForms integration; Peer to Peer networking. If you look at any n-tier LOB application today it probably doesn't contain half the features baked into Terrarium. Really. While it's connned a "game", it's a distributed n-tier application that has some pretty impressive stats on performance (at one point, most of Microsoft was running it internally as screen savers all slamming the server with little or no effect).

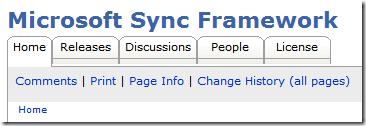

In any case, work has begun on WPF clients and new WinForms 2.0 clients. Web Services are being looked at to be overhauled to become WCF services (and eventually tie into the "cloud" via .NET 4.0 relay services). The 2.1 Vista and server fix is in QA. The user wiki is growing with new content on a regular basis (feel free to toss your own in, it's a wiki eh). There's a Facebook group to talk about it swap ideas, stories, or bugs (if you're into that social thing) and some other happenings that I can't go public with yet but you'll see them in the new year.

Overall it's picking up steam and I hope you'll give it a looksee and feel free to poke, prod, question, and contribute!

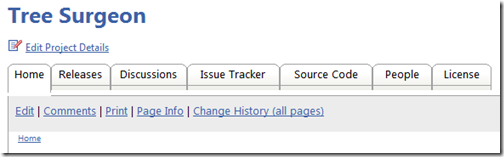

Tree Surgeon

No, I haven't abandoned my little Tree Surgeon buddy. In fact we're motoring ahead with 3.5 and looking at a few things to help aid in development. MEF is huge and I'm grateful we have awesome dudes out there like Glen Block pushing this stuff. The P&P group has been phenomenal the past couple of years (yes guys, I forgive you for Enterprise Library and the Database Application Block). Prism kicks butt and MEF is very cool. Not only the fact that it'll be included in the next framework release, but for a project like Tree Surgeon (where I've been toying with a Plugin architecture for awhile now) it fits like a glove. It took me watching Scott Hanselman's BabySmash for the Masses screencast from PDC08 to really "get" MEF but now that I do I'm looking at hooking it into Tree Surgeon. This should open up a lot of new possiblies for adding in or modifying how Tree Surgeon generates it's solutions. If you need more capabilties or even an entire directory structure change, it'll be just a plugin away.

SharePoint Forums and Knowledge Base Web Parts

The SharePoint monkey on my back that I can't seem to shake. I haven't done a proper release for over a year on my web parts and the SharePoint 2007 compatibility still isn't there. My bad. Need to get this done so trying to wrap up a nice Christmas package for all you little SharePoint geeks out there. Hopefully SantaBil will make the deadline of December 25th he's set for himself.

Stumbled across “Tiny Footprint” mode of the Task Manager today while I’m tracking down a nasty hijacker Smitfraud-C malware bot on my Jenn’s machine.

Thought for a minute I was in a different program or OS or something wrong was going on. Turns out if you double-click on the area outside the tabs on Task Manager it throws a hissy fit and jumps into what Microsoft dubs “Tiny Footprint” mode.

I don’t know what disturbs me more, the fact that this “mode” exists and is not very known, or that they have a KB article on it in case users “accidentally” get themselves into it.

What do 3 geeks do at 4AM in the parking lot of an IKEA in Calgary on a Saturday morning? Plot to overthrow Future Shop in the hopes of obtaining a rare shipment of WiiFits? Maybe. Test the cold-tolerance level of Dell laptops using Canadian Tire car batteries as their only source of power? Could be. Make cheap jokes about doing drag and drop presentations then have t-shirts made up to mock Microsoft employees?

Nah, they get together to drive to Edmonton and attend the Edmonton Code Camp silly.

That's what me and 2 of my very tired friends will be doing this Saturday (note, Tim Hortons will be our cuddly buddies come Saturday) as we head up to Edmonton, home of a hockey team that leaves their players quivering on the ice, questioning their sexuality.

I'll be presenting two fun-filled-uber-cool-extra-special-director-cut-edition-limited-time-only-before-they-go-back-into-the-vault sessions:

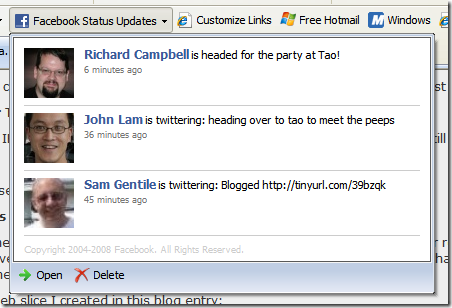

How to Win Friends and Influence People on Facebook, in .NET

Yes, building Facebook apps isn't only restricted to PHP and procedural code. We'll go through building apps using the newly released 2.0 Facebook Toolkit for .NET and talk about restrictions that social networking sites put on how much information you can (and cannot) farm out of their API. And yeah, we'll build some cool Facebook games too (in .NET).

Building Really Cool Apps with WPF

We'll do some cool stuff. It's a code camp. Bring yer laptops and fire up Visual Studio and we'll make XAML stand on it's head and do somersaults over any ORM anyday. jQuery? Bah. This is WPF baby and it your user experience doesn't get any richer than this.

My sessions are deemed a PowerPoint Free Zone to help make the planet a little nicer place to live in. Rock on big Al.

See you there!

I had to work on some code inside of a “real” *nix system recently so I though I would give everyone a visual walkthrough of setting up the operating system. I need to pave a new image so I figured I would just share with the rest of the class setting up a *nix system from scratch using Virtual PC. You can accomplish the same with VMWare, I just happen to be using Virtual PC for this.

For the OS I chose Debian. Actually I have several unix images for this type of work (Linux, NetBSD, etc.) but I’ve always liked Debian. It’s a slick text based install and works quite well, right down to the part of being able to select only what I need. I found the other Unixes to be cumbersome getting setup sometimes and not very visually appealing (installing NetBSD is like watching Justice Gray do his hair).

So here’s the visual walkthrough of creating your own Debian install using Virtual PC. This install was done with Debian 4.0R5.

Pre-Install

You’ll need a couple of things to get started. First your Virtual PC (or VMWare if you choose) to run the guest operating system. You’ll also need an ISO image of Debian. I used the 180mb netinst ISO image file to start. It’s larger than the 40mb version but the 40mb version doesn’t even include the base system so I saved the download by getting the larger image. You can get the image from this page here. Choose the i386 ISO from the first list of options on the page.

Once you’ve got the ISO ready you can setup your Virtual PC image and start. Here we go.

The Walkthrough

From Virtual PC, select the “New Virtual Machine Wizard” from the File Menu:

Click Next

Select “Create a virtual machine” from the options and click Next:

Give the virtual machine a name and optionally choose a location. I keep all my VMs on a portable USB drive:

Leave the operating system as “Other” and click Next:

You can adjust the ram if desired. I’m doing console development so 128mb of RAM is fine. If you want to install X-Windows or something then you might want to bump this up. It can be changed later so you can leave it for now and click Next:

Change the option to create a new virtual hard disk for you and click Next:

By default the new virtual hard disk is created where your virtual machine is created. This is fine and you can leave the default then click Next:

The confirmation screen will show you the options. Click Finish to create the new virtual machine. One more setting that you may need to change. Select the network adapter for the virtual machine to bridge to your host adapter. This allows Debian to obtain and IP and access the internet to download modules.

Your Virtual PC Console will show you the new virtual machine ready to start. Select it and click Start:

The new VM will boot but you need to capture the ISO image you downloaded earlier. Select CD from the menu and select Capture ISO Image:

Now browse to where your netimage ISO is located and select it then click Open:

From the Action menu in Virtual PC select Ctrl+Alt+Delete:

Debian will now boot. Press enter to continue:

A few hundred lines of gobbly-gook will flash by until you arrive at this screen. Pick your language and press enter:

Choose your country next and press enter:

Next choose a keyboard layout to use:

After a few minutes of loading screens you’ll be asked to provide a hostname for the system. Enter one and click continue:

By default the next screen will probably grab your ISP domain name but you can change it to whatever you want as this is going to be a local machine for testing. Enter a name and click continue:

More hardware detection and the disk partitioning starts. As this is a VM you can just select the first option and let Debian guide you through using the entire disk. Select that option and press enter:

Debian will find the 16gb hard drive the VM is showing (but it’s not really taking up 16gb on disk, well, not yet anyways). Select it and press enter:

The next option is how you configure the partitions. For *most* *nix installs I choose the 3rd option and split up my /home, /usr, and /var directories. However that’s usually on systems with multiple disks and again this is a test system right? Pick the first option to put all the files in a single partition and press enter:

You’ll be given one final chance to back out. Go ahead and press enter to write those changes:

Tee hee. Fooled ya. There’s one more confirmation. They *really* don’t want you to destroy data (like I did years ago and lost the contents of a book I was writing, tell you about that over beers one day). Select Yes and go for it.

Partitions get formatted, the world is a better place and we’re onto the next step. Congratulations! You’ve reformatted your hard drive (well, your virtual one). Select a time zone so Debian knows when to move clocks around on you:

Next is the all important “root” password. This is a test system so choose the uber-secure password of “password”.

And you’ll be asked to enter it again:

Next you’re asked to setup a real user (rather than using root). While this is a test system, it’s like logging into SQL Server using “sa” and kind of feels dirty. Besides in the unix world you can actually accomplish things as regular users without being nagged by a UAP dialog and a simple “su” command let’s you become “root” again. Enter the name of your real user here and press enter:

Next you’re asked for an account for the user. Give it a simple name (no spaces) that’s easy to remember. For the hackers reading this, I always use “bsimser”:

Enter a password for your new user. Again, I chose to use “password” feeling that nobody would be able to guess it (I also considered “love”, “sex”, “secret” and “god” but thought was too over the top):

Debian now installs the base system. Go for a coffee. It’ll be a few minutes. Go on. I’ll wait.

When you get back Debian will be asking you about mirrors. The netinst version has a base system, but it can (and should) leverage internet mirrors for files. It’ll do checks against the mirrors to get the latest versions and updated packages for you so it’s a good idea to say Yes to this option.

Once you’ve committed your soul to the installer, you’ll be asked to pick a mirror. Choose one in your own country to reduce the traffic sent around the world and press enter:

A list of mirror sites will come up based on your country choice (you did choose YOUR country right?). I’m not sure if the mirror list is sorted by access speed but whatever. Pick one as they’re all near you anyway. You might find a mirror doesn’t work during the install (nothing in life is guaranteed, including this walkthrough) so if you find it doesn’t work, Debian will bump you back to the mirror selection screen (this one). Pick another and try again. One of them is bound to work, eventually.

If you’re behind a firewall or proxy you need to let the package manager know this information in order for it to do your bidding. Enter it in the next screen:

Debian then downloads and configures the package selector. Next step is the dreaded “tasksel”. This is sort of Linux for Dummies where you tell it you want a desktop, or web server, or SQL server and it picks the packages for you. Frankly, I *always* avoid this step. I like to get a clean system up and running *then* decide what I need to install on it. Also, as this is a VM you can just copy your clean system and have lots of little VMs running around with different purposes. If you choose a configuration here you are on your own, I cannot follow you on that path. However I recommend deselecting everything here and pressing Continue. We can install packages later.

Next Debian will ask you if you want to install the GRUB boot loader. As this is the only OS on the system let GRUB do it’s thing and install to the master boot record.

That’s it for this part. Before you reboot, release the CD ISO image by choosing the option in the CD menu of Virtual PC. Now press Continue and hold your breath.

More gobbly-gook and you’ll be at your Debian login prompt. Login as your regular user you created previously:

If you’re really lonesome for that DOS feeling type ‘alias dir=”ls –l”’ at the command prompt after you login. You’ll be able to type “dir” now and it’ll look *kind of* like your old world.

Now we have a bare-bones system. You don’t have an ftp client or a telnet client or any developer tools! Let’s fix that.

All (most?) unix systems these days have some kind of packaging system that allows you to get all these extras. It’s like a big warehouse of software just waiting to install to your desktop. Debians is called APT. There’s an entire HOWTO here on it if you want to get all down and dirty with it. APT is your friend. Learn it, live it, love it.

For example type this at the command prompt:

su<ENTER>

Enter your root password

apt-get update<ENTER>

You should see something like this:

The contents will vary as these are from my mirror but basically it just updates the catalog of “stuff” you can get. Now (while still impersonating the root account) type this:

apt-get install ftp<ENTER>

You’ll see a result similar to this:

Remeber when Trinity needed a pilot program for a military M-109 helicopter and in a few seconds Tank downloaded it to her? Well, you can now type in “ftp my.favorite.porn.site” and get there instantly (or as fast as your internet connection will take you). Cool huh?

I prefer the APT command line but it takes some getting used to. There’s also a tool called “dselect”. While still running as root, type “dselect” and you’ll eventually get to a screen like this:

It let’s you pick a package to install, provides a description of it, and let’s you install it but just selecting it. Type it in using the apt tool or pick it using this one. Your choice.

That’s pretty much it kids. By now you have a fully working Debian image you can clone. Use the APT tool to install your favorite tools and get to work and hope this lengthy blog post filler helped.

Enjoy!

I'm a command line app junkie as I love working with it. I guess it's my DOS/Linux roots but I find things go faster when you're not dealing with a GUI and a mouse. Command line tools like NAnt and MSBuild have all sorts of options and syntax. Some of it discoverable, some of it not so much. NAnt for example will try to find a buildfile to run and execute it. It also will display the name and version of the app (which is useful in build logs so you know what's going on). There are other things like trying to find out how to run a command line tool. For example if you type "nant /?" you'll get this:

NAnt 0.86 (Build 0.86.3075.0; nightly; 02/06/2008)

Copyright (C) 2001-2008 Gerry Shaw

http://nant.sourceforge.netUnknown argument '/?'

Try 'nant -help' for more information

Entering the proper syntax of "nant -help" displays this:

NAnt 0.86 (Build 0.86.3075.0; nightly; 02/06/2008)

Copyright (C) 2001-2008 Gerry Shaw

http://nant.sourceforge.netNAnt comes with ABSOLUTELY NO WARRANTY.

This is free software, and you are welcome to redistribute it under certain

conditions set out by the GNU General Public License. A copy of the license

is available in the distribution package and from the NAnt web site.Usage : NAnt [options] <target> <target> ...

Options :-t[argetframework]:<text> Specifies the framework to target

-defaultframework:<text> Specifies the framework to target (Short format: /k)

-buildfile:<text> Use given buildfile (Short format: /f)

-v[erbose][+|-] Displays more information during build process

-debug[+|-] Displays debug information during build process-q[uiet][+|-] Displays only error or warning messages during

build process

-e[macs][+|-] Produce logging information without adornments

-find[+|-] Search parent directories for build file

-indent:<number> Indentation level of build output

-D:<name>=<value> Use value for given property

-logger:<text> Use given type as logger

-l[ogfile]:<filename> Use value as name of log output file

-listener:<text> Add an instance of class as a project listener

-ext[ension]:<text> Load NAnt extensions from the specified assembly

-projecthelp[+|-] Prints project help information

-nologo[+|-] Suppresses display of the logo banner

-h[elp][+|-] Prints this message

@<file> Insert command-line settings from a text file.A file ending in .build will be used if no buildfile is specified.

A lot of options there but pretty standard fare for a console application. And a lot of work to parse the options, validate them, display help messages, etc. I had a link to this thing called the Adaptive Console Framework sitting in my Action folder in Outlook and finally got around to looking at it. It's a library by Sunny Chen that takes the pain of command line junk by doing most of the heavy lifiting for you.

This is what the console framework provides for you. A nice, simple way of not having to write a lot of code to deal with complex command line options and something that gives you a few other benefits along the way like automatic help text generation and easy access to command line options. Notice that the syntax for displaying NAnt help was "nant -help" but it wouldn't allow variations like "nant /?" or "nant -?". The framework as we'll see let's us make it easy to just add variations to command line syntax without doing a lot of work.

The framework is a little gem of a library that I didn't think much about before but now after spending an entire hour of my hard earned time I think it's pretty slick. Here's a transformation of the console version of Tree Surgeon.

The old version of the Tree Surgeon console (betcha didn't even think there was one!) was a little boring and actually broken. If you ran it without any arguments you got this:

TreeSurgeon version 1.1

Copyright (C) 2007 - 2008 Bil Simser

Copyright (C) 2005 - 2006 Mike Roberts, ThoughtWorks, IncCreates a .NET Development tree

TreeSurgeon projectName

Please note - project name must not contain spaces. We recommend you use CamelCase for project names.

You could probably surmise you need to provide a project name at least. But what about those other options like version and what unit test framework to use? And frankly this is wrong since it's not version 1.1, this output was from the 2.0 version. Lots of little problems here.

Here's the source for the command line runner:

[STAThread]

private static int Main(string[] args)

{

try

{

return RunApp(args);

}

catch (Exception e)

{

Console.WriteLine("Unhandled Exception thrown. Details follow: ");

Console.WriteLine(e.Message);

Console.WriteLine(e.StackTrace);

return -1;

}

}

And here's the RunApp method:

private static int RunApp(string[] args)

{

Console.WriteLine("TreeSurgeon version 1.1");

Console.WriteLine("Copyright (C) 2007 - 2008 Bil Simser");

Console.WriteLine("Copyright (C) 2005 - 2006 Mike Roberts, ThoughtWorks, Inc");

Console.WriteLine();

if (args.Length != 2)

{

Usage();

return -1;

}

Console.WriteLine("Starting Tree Generation for " + args[0]);

Console.WriteLine();

string outputDirectory = new TreeSurgeonFrontEnd(

Path.GetDirectoryName(Assembly.GetExecutingAssembly().Location), args[1]).

GenerateDevelopmentTree(args[0], "NUnit");

Console.WriteLine("Tree Generation complete. Files can be found at " + outputDirectory);

return 0;

}

RunApp would output the logo and copyright info and give you the ugly Usage() message (which wasn't too useful) if you didn't pass in at least a project name. You could pass in a version to build (2003, 2005, or 2008) but the unit test framework was hard coded to NUnit. Like I said, not too useful.

After taking a quick glance at what the Adaptive Console Framework (ACF) could do I decided to transform the Tree Surgeon console runner using it and see what we could get.

The ACF basically has two steps to it (this is overly simplifying it but you'll see it's pretty easy). First you make a slight adjustment to your main console application method, then you get down and dirty by creating option contracts (via attributes, classes, and properties). This has a big bonus that I immediately saw which was to move the command line options into a separate assembly and class which meant I could test it without actualy having to run the application and secondly it would take care of most of the heavy lifting of dealing with command line syntax.

So the first thing I did was to ditch that RunApp method and replace the call to have the ConsoleApplicationManager class from the ACF do my work. Here's the updated Main method from the Tree Surgeon console app:

[STAThread]

private static void Main(string[] args)

{

try

{

ConsoleApplicationManager.RunApplication(args);

}

catch (Exception e)

{

Console.WriteLine("Unhandled Exception thrown. Details follow:");

Console.WriteLine(e.Message);

Console.WriteLine(e.StackTrace);

}

}

Next I created a new assembly (called TreeSurgeonConsoleApplication.dll) and added an app.config file to the console app so the ACF could find my option contracts and added a reference to the ACF assembly. Here's the newly added app.config file:

<?xml version="1.0" encoding="utf-8" ?>

<configuration>

<configSections>

<section name="AdaptiveConsole"

type="AdaptiveConsole.Config.AdaptiveConsoleConfigHandler, AdaptiveConsole"/>

</configSections>

<AdaptiveConsole provider="TreeSurgeonConsoleApplication.TreeSurgeon, TreeSurgeonConsoleApplication"

contractRepository="TreeSurgeonConsoleApplication"/>

</configuration>

The app.config file just tells the ACF two things. The name and location of my console provider and the assembly where to find the option contracts. That was all I had to do in my TreeSurgeonConsole project so after removing the reference to the Core project (where the actual Tree Generation would happen) I closed down the console app project. Thinking about it, with the app.config file you could really use a generic console application project for *any* console app since there's nothing specific in here anymore. Nice.

The console provider is a class derived from ConsoleApplicationBase in the ACF and has two string overrides you provide, a logo and a description. Here's the TreeSurgeon class that we just specified in our app.config file:

public class TreeSurgeon : ConsoleApplicationBase

{

public TreeSurgeon(string[] args) : base(args)

{

}

protected override string Logo

{

get

{

var sb = new StringBuilder();

sb.AppendFormat("TreeSurgeon version 2.0{0}", Environment.NewLine);

sb.AppendFormat("Copyright (C) 2007 - 2008 Bil Simser{0}", Environment.NewLine);

sb.Append("Copyright (C) 2005 - 2006 Mike Roberts, ThoughtWorks, Inc.");

return sb.ToString();

}

}

protected override string Description

{

get { return "Creates a .NET development tree"; }

}

}

We're emulating part of the old RunApp method here. When I run the console app now I get this:

TreeSurgeon version 2.0

Copyright (C) 2007 - 2008 Bil Simser

Copyright (C) 2005 - 2006 Mike Roberts, ThoughtWorks, Inc.Creates a .NET development tree

Looks pretty much the same however like I said, I can now test the TreeSurgeon class (for example make sure the logo is set correctly because I might decide down the road to make the property a little more dynamic like fetching values using Reflection). I'm also not actually running anything yet so if I was building my app using TDD this fits nicely with that approach.

That's it for this part of the conversion but like I said, I don't have it running my TreeSurgeonFrontEnd class yet or generating the development tree or verifying the command line or displaying help. That now comes with our options.

With the ACF you define your command line options through something called "Option Contracts". There are four types in the ACF: None, Exact, Patternized, and Free. For Tree Surgeon I want the user to be able to run the application using these options:

We'll only look at the None Contract and the Patternized contract types.

The None Contract is a class that you inherit from OptionContractBase. It will be executed if the user provides no command line arguments to the application. Create a class that derives from OptionContractBase in your contract assembly. Here's the None contract for Tree Surgeon:

[OptionContract(

Type = ContractType.None,

Description = "Prints the help information on the screen.")]

public class TreeSurgeonEmptyContract : OptionContractBase

{

public override void Execute(

ConsoleApplicationBase consoleApplication,

IList<ArgumentInfo> args)

{

consoleApplication.PrintHelpMessage();

}

}

The class is decorated with an OptionContractAttribute that let's you specify the type of contract (None, Exact, Free, Patternized) and a description. Note we haven't done anything anywhere else in the system (the app.config file is done, the console Main method is done, and the ConsoleApplicationBase class is baked). All we're doing is adding a new class to the assembly we specified as our contractRepository in our app.config file.

Here's the output of the app now when no arguments are passed to it:

TreeSurgeon version 2.0

Copyright (C) 2007 - 2008 Bil Simser

Copyright (C) 2005 - 2006 Mike Roberts, ThoughtWorks, Inc.Creates a .NET development tree

> Calling the application without arguments

Prints the help information on the screen.

Sweet. Now let's start adding our options for actually running the app.

We'll add a new class called TreeSurgeonCommandsContract (again derived from OptionContractBase). This time rather than specifying the type as "None" we'll use "Patternized". The Patternized type is a contract type where your console application requires a complex command line argument. You can define the options that are mandatory or not within the contract, you can define the options that carry a list of values and you can even define the switches in the patternized contracts. here's our TreeSurgeonCommandsContract class:

[OptionContract(

Type = ContractType.Patternized,

Description = "Generates a new .NET development tree for a given project name.")]

public class TreeSurgeonCommandsContract : OptionContractBase

The main thing we need to capture is the project name that we want to generate the tree for. We'll do this by creating a property (called ProjectName) and decorating it with the OptionAttribute:

[Option(

Type = OptionType.SingleValue,

Name = "/p;/project",

Required = true,

Description = "Specifies the project name.\r\n\t" +

"Please note - project name must not contain spaces.\r\n\t" +

"We recommend you use CamelCase for project names.")]

public string ProjectName { get; set; }

This tells the ACF that a) this option has a single value b) it's specified by either "/p:" or "/project:" and c) it's required. There's also a description we provide which will be displayed in our output that looks like this now:

TreeSurgeon version 2.0

Copyright (C) 2007 - 2008 Bil Simser

Copyright (C) 2005 - 2006 Mike Roberts, ThoughtWorks, Inc.Creates a .NET development tree

TreeSurgeonConsole.exe </p|/project:>

> Calling the application without arguments

Prints the help information on the screen.> Generates a new .NET development tree for a given project name.

/p|/project:value (required):

Specifies the project name.

Please note - project name must not contain spaces.

We recommend you use CamelCase for project names.

Notice that we now have the application name (TreeSurgeonConsole.exe) along with a required property. And the help is displayed for that property. Again, pretty damn simple so far. At this point we could actually implement the required Execute method on the TreeSurgeonCommandsContract class and call out to our TreeSurgeonFrontEnd, passing it the ProjectName property. We would generate a developement tree just like the original system and we're done. However we're only about 20 minutes into our conversion so we can do a lot more.

First we'll add a property to specify the version of the development tree we want to generate. This is again just a string property in our TreeSurgeonCommandsContract class decorated with the OptionAttribute. We'll make this optional and provide a default value for it along with instructions:

[Option(

Type = OptionType.SingleValue,

Name = "/v;/version",

Required = false,

Default = "2008",

Description = "Specifies the Visual Studio version to generate.\r\n\t" +

"Valid options are: \"2003\", \"2005\", or \"2008\"\r\n\t" +

"Default is \"2008\"")]

public string Version { get; set; }

Then we'll do the same for our UnitTestFramework we want to specify (NUnit or MbUnit):

[Option(

Type = OptionType.SingleValue,

Name = "/t;/test",

Required = false,

Default = "NUnit",

CaseSensitive = true,

Description = "Specifies the Unit Test framework to use when generating the tree.\r\n\t" +

"Valid options are: \"NUnit\", or \"MbUnit\"\r\n\t" +

"Default is \"NUnit\"")]

public string UnitTestFramework { get; set; }

Now we can run our app and see the help the ACF is providing:

TreeSurgeon version 2.0

Copyright (C) 2007 - 2008 Bil Simser

Copyright (C) 2005 - 2006 Mike Roberts, ThoughtWorks, Inc.Creates a .NET development tree

TreeSurgeonConsole.exe </p|/project:> [/v|/version:] [/t|/test:]

> Calling the application without arguments

Prints the help information on the screen.> Generates a new .NET development tree for a given project name.

/p|/project:value (required):

Specifies the project name.

Please note - project name must not contain spaces.

We recommend you use CamelCase for project names./v|/version:value :

Specifies the Visual Studio version to generate.

Valid options are: "2003", "2005", or "2008"

Default is "2008"/t|/test:value :

Specifies the Unit Test framework to use when generating the tree.

Valid options are: "NUnit", or "MbUnit"

Default is "NUnit"

Lots of great stuff here and all we've done was specify some attributes around a few properties. What I really like are a few things we got for free:

Now we'll actually implement the code to run our generator and use whatever values you pass along in the command line.

To get the framework to do our bidding, we implement the Execute method in our TreeSurgeonCommandsContract class. This method passes in a copy of the ConsoleApplicationBase class (we specified above as TreeSurgeon) and an IList of ArgumentInfo values which were passed into the application. This is more than just a string so we can get information from our arguments like what type of argument they are.

For Tree Surgeon, we need at least one option (the project name). We'll use a little LINQ to get the list of options from our passed in parameter and check to make sure that a) we have at least 1 option and b) we have a project name:

var options = from arg in args

where arg.Type == ArgumentType.Option

select arg;

if(options.Count() < 1 || string.IsNullOrEmpty(ProjectName))

{

consoleApplication.PrintHelpMessage();

return;

}

Now that we've got a valid command line we'll reproduce what our old RunApp method did, namely invoke the TreeSurgeonFrontEnd class which will generate our development tree for us. We'll make it a little more interesting than version 1.1 and print out a little more information on what options we're using to generate the tree. Here's our Execute method so far:

public override void Execute(ConsoleApplicationBase consoleApplication, IList<ArgumentInfo> args)

{

var options = from arg in args

where arg.Type == ArgumentType.Option

select arg;

if(options.Count() < 1 || string.IsNullOrEmpty(ProjectName))

{

consoleApplication.PrintHelpMessage();

return;

}

consoleApplication.PrintLogo();

Console.WriteLine("Starting Tree Generation{0}", Environment.NewLine);

Console.WriteLine(" Project Name: \"{0}\"", ProjectName);

Console.WriteLine(" Version: \"{0}\"", Version);

Console.WriteLine("Unit Test Framework: \"{0}\"", UnitTestFramework);

Console.WriteLine();

var frontEnd = new TreeSurgeonFrontEnd(Path.GetDirectoryName(Assembly.GetExecutingAssembly().Location), Version);

var outputDirectory = frontEnd.GenerateDevelopmentTree(ProjectName, UnitTestFramework);

Console.WriteLine("Tree Generation complete.{0}{0}Files can be found at:{0}\"{1}\"", Environment.NewLine, outputDirectory);

}

And here's the output using the command line "treesurgeonconsole.exe /p:test":

TreeSurgeon version 2.0

Copyright (C) 2007 - 2008 Bil Simser

Copyright (C) 2005 - 2006 Mike Roberts, ThoughtWorks, Inc.Creates a .NET development tree

Starting Tree Generation

Project Name: "test"

Version: "2008"

Unit Test Framework: "NUnit"Tree Generation complete.

Files can be found at:

"C:\Documents and Settings\simserb\My Documents\TreeSurgeon\test"

Wait! We're only 45 minutes into our conversion and there's more features we can take on. Most apps let you turn off the silly logo/copyright info (usually with a "/nologo" switch). The ACF has a nice feature to specify switches on properties. You just add a boolean property to your class and decorate accordingly. Here's our "/nologo" switch:

[Option(

Type = OptionType.Switch,

Name = "/nologo",

Description = "When turned on, the logo and description\r\n\t" +

"information will not be displayed.")]

public bool NoLogo { get; set; }

Now that we have a bool property if the user adds "/nologo" to the command line we should not print out the header info:

if(!NoLogo)

{

consoleApplication.PrintLogo();

}

Finally one last thing before we're done. A bug in the old system was that if you tried to generate a new tree over top of an existing directory, it would bomb out with something like this:

TreeSurgeon version 1.1

Copyright (C) 2007 - 2008 Bil Simser

Copyright (C) 2005 - 2006 Mike Roberts, ThoughtWorks, IncStarting Tree Generation for test

Unhandled Exception thrown. Details follow:

Can't generate directory [C:\Documents and Settings\simserb\My Documents\TreeSurgeon\test] since it already exists on disk. Wait until a later version, or delete the existing directory!

at ThoughtWorks.TreeSurgeon.Core.SimpleDirectoryBuilder.CreateDirectory(String directoryName) in C:\Development\TreeSurgeon-2_0_0_0.source\src\Core\SimpleDirectoryBuilder.cs:line 12

at ThoughtWorks.TreeSurgeon.Core.TreeSurgeonFrontEnd.GenerateDevelopmentTree(String projectName, String unitTestName) in C:\Development\TreeSurgeon-2_0_0_0.source\src\Core\TreeSurgeonFrontEnd.cs:line 42

at ThoughtWorks.TreeSurgeon.TreeSurgeonConsole.TreeSurgeonConsoleMain.RunApp(String[] args) in C:\Development\TreeSurgeon-2_0_0_0.source\src\TreeSurgeonConsole\TreeSurgeonConsoleMain.cs:line 44

at ThoughtWorks.TreeSurgeon.TreeSurgeonConsole.TreeSurgeonConsoleMain.Main(String[] args) in C:\Development\TreeSurgeon-2_0_0_0.source\src\TreeSurgeonConsole\TreeSurgeonConsoleMain.cs:line 15

Highly useful. Let's add a new feature to our command line, an "/overwrite" swtich. It'll be just like the "/nologo" switch except that if it's specified, we'll delete the directory before we generate the tree:

[Option(

Type = OptionType.Switch,

Name = "/overwrite",

Description = "When turned on, any project with the same name\r\n\t" +

"will be deleted.")]

public bool Overwrite { get; set; }

And here's the updated tree generation code with the check to see if we should delete the output directory first:

var frontEnd = new TreeSurgeonFrontEnd(Path.GetDirectoryName(Assembly.GetExecutingAssembly().Location), Version);

if (Overwrite)

{

Directory.Delete(frontEnd.GetOutputPath(ProjectName), true);

}

var outputDirectory = frontEnd.GenerateDevelopmentTree(ProjectName, UnitTestFramework);

Console.WriteLine("Tree Generation complete.{0}{0}Files can be found at:{0}\"{1}\"", Environment.NewLine, outputDirectory);

That's it! In under 60 minutes we were able to totally transform the command line tool into something a little more robust and testable (and even add a new feature to fix an old bug). Now when we run the Tree Surgeon console app we get a rich descriptive help screen:

TreeSurgeon version 2.0

Copyright (C) 2007 - 2008 Bil Simser

Copyright (C) 2005 - 2006 Mike Roberts, ThoughtWorks, Inc.Creates a .NET development tree

TreeSurgeonConsole.exe </p|/project:> [/v|/version:] [/nologo] [/overwrite] [/t|/test:]

> Calling the application without arguments

Prints the help information on the screen.> Generates a new .NET development tree for a given project name.

/p|/project:value (required):

Specifies the project name.

Please note - project name must not contain spaces.

We recommend you use CamelCase for project names./v|/version:value :

Specifies the Visual Studio version to generate.

Valid options are: "2003", "2005", or "2008"

Default is "2008"[/nologo]:

When turned on, the logo and description

information will not be displayed.[/overwrite]:

When turned on, any project with the same name

will be deleted./t|/test:value :

Specifies the Unit Test framework to use when generating the tree.

Valid options are: "NUnit", or "MbUnit"

Default is "NUnit"

A few benefits I got from this conversion:

So, if you've got a console application sitting around you might want to give the ACF a spin and try it out. Or if you're building a new app take a look at it. It was low impact and high value for my investment and gave me a better end result that's now testable and easy to extend. You might find it useful like I did. Many thanks to Sunny Chen for putting this library together, it's a great tool.

Enjoy!

Trying out a new look. Changed the theme on the blog and created a custom header. The header is an image captured from Wordle which is a pretty neat tool. Give it a bunch of text or point it at a feed and you get a jumble of words. I pointed it at my own feed as it seemed appropriate and it spit out this. Anyways, hope you like it. I think I needed a change from the Marvin3 theme I've had for the past few years.

Sometimes you need a column in your database to automatically increment (like an identity column) in order to provide back to a user say a confirmation number (when an item is added to that table). In NHibernate there's no way to specify this kind of behavior with normal mappings because the column in the Id tag has to be the primary key. Here's a technique we used to do this.

Let's say we have a ticketing system (like TicketMaster) and it's going to give back the user a confirmation number after adding their request. The TicketRequest table ID is keyed off of a Guid but we can't provide that back to the user so we need an additional property called ConfirmationNumber (plus we don't want to expose ID fields to the users).

Specify your table mappings like so:

<class name="TicketRequest" lazy="false">

<id name="Id" type="guid.comb">

<generator class="assigned"/>

</id>

<property name="ConfirmationNumber" generated="insert" insert="false" update="false">

</class>

Then in the same mapping file change the ConfirmationNumber column on creation to an identity column using the <database-object> tag:

<database-object>

<create>

ALTER TABLE TicketRequest DROP COLUMN ConfirmationNumber

ALTER TABLE TicketRequest ADD ConfirmationNumber INT IDENTITY

</create>

</database-object>

There you have it. When you insert your record, you'll be able to use a Guid ID field but provide back an auto-incrementing field to your users.

Hope that helps.

When you launch a XBAP application you can sometimes stumble over the XBAP error page which might look something like this:

Here's a WPF user control that you can drop into any application that simulates the error icon you see. It's a simple Canvas control with two Grids and uses Paths and Elipses to define the graphical look.

First create a new WPF User Control in your project or a library. Name it whatever you like, I called mine ErrorIcon. It'll be a type derived from UserControl so go into the XAML and change this to Canvas and also update the ErrorIcon.xaml.cs class to derive from Canvas instead of UserControl.

<Canvas x:Class="WpfApplication1.UserControl1"

xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation"

xmlns:x="http://schemas.microsoft.com/winfx/2006/xaml"

Height="300" Width="300">

<Grid>

</Grid>

</Canvas>

namespace WpfApplication1

{

/// <summary>

/// Interaction logic for UserControl1.xaml

/// </summary>

public partial class UserControl1 : Canvas

{

public UserControl1()

{

InitializeComponent();

}

}

}

Now drop in the following XAML code into your newly created Canvas:

<Canvas x:Class="WpfApplication1.ErrorIcon" xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation" xmlns:x="http://schemas.microsoft.com/winfx/2006/xaml" x:Uid="ErrorCanvas" Margin="0,3,0,0" Width="44">

<Grid Name="RedWarning" x:Uid="RedWarning" Width="44" Height="44" Visibility="Visible">

<Ellipse x:Uid="Ellipse_1">

<Ellipse.Fill>

<LinearGradientBrush x:Uid="LinearGradientBrush_14" StartPoint="0,0" EndPoint="0,1">

<LinearGradientBrush.GradientStops>

<GradientStopCollection x:Uid="GradientStopCollection_4">

<GradientStop x:Uid="GradientStop_32" Color="OrangeRed" Offset="0" />

<GradientStop x:Uid="GradientStop_33" Color="DarkRed" Offset="1" />

</GradientStopCollection>

</LinearGradientBrush.GradientStops>

</LinearGradientBrush>

</Ellipse.Fill>

<Ellipse.Stroke>

<LinearGradientBrush x:Uid="LinearGradientBrush_15" StartPoint="0,0" EndPoint="0,1">

<LinearGradientBrush.GradientStops>

<GradientStopCollection x:Uid="GradientStopCollection_5">

<GradientStop x:Uid="GradientStop_34" Color="transparent" Offset="0" />

<GradientStop x:Uid="GradientStop_35" Color="#44ffffff" Offset="1" />

</GradientStopCollection>

</LinearGradientBrush.GradientStops>

</LinearGradientBrush>

</Ellipse.Stroke>

</Ellipse>

<Ellipse x:Uid="Ellipse_2" Opacity="0.5" Stroke="Transparent" Margin="1">

<Ellipse.Fill>

<LinearGradientBrush x:Uid="LinearGradientBrush_16" StartPoint="0,0" EndPoint="0,1">

<LinearGradientBrush.GradientStops>

<GradientStopCollection x:Uid="GradientStopCollection_6">

<GradientStop x:Uid="GradientStop_36" Color="white" Offset="0" />

<GradientStop x:Uid="GradientStop_37" Color="transparent" Offset="1" />

</GradientStopCollection>

</LinearGradientBrush.GradientStops>

</LinearGradientBrush>

</Ellipse.Fill>

</Ellipse>

<Path x:Uid="Path_1" Stretch="Fill" Width="19.878" Height="19.878" StrokeThickness="5" Stroke="#FFFFFFFF" StrokeStartLineCap="Round" StrokeEndLineCap="Round" Data="M 200,0 L 0,200 M 0,0 L 200,200" />

</Grid>

<Grid x:Uid="RedReflection" Width="44" Height="44" Visibility="Visible" Canvas.Top="80" Canvas.Left="0">

<Grid.OpacityMask>

<LinearGradientBrush x:Uid="LinearGradientBrush_20" StartPoint="0,1" EndPoint="0,0">

<GradientStop x:Uid="GradientStop_44" Offset="0" Color="#3000" />

<GradientStop x:Uid="GradientStop_45" Offset="0.9" Color="Transparent" />

</LinearGradientBrush>

</Grid.OpacityMask>

<Grid.RenderTransform>

<ScaleTransform x:Uid="ScaleTransform_2" ScaleX="1" ScaleY="-0.85" />

</Grid.RenderTransform>

<Ellipse x:Uid="Ellipse_3">

<Ellipse.Fill>

<LinearGradientBrush x:Uid="LinearGradientBrush_17" StartPoint="0,0" EndPoint="0,1">

<LinearGradientBrush.GradientStops>

<GradientStopCollection x:Uid="GradientStopCollection_7">

<GradientStop x:Uid="GradientStop_38" Color="OrangeRed" Offset="0" />

<GradientStop x:Uid="GradientStop_39" Color="DarkRed" Offset="1" />

</GradientStopCollection>

</LinearGradientBrush.GradientStops>

</LinearGradientBrush>

</Ellipse.Fill>

<Ellipse.Stroke>

<LinearGradientBrush x:Uid="LinearGradientBrush_18" StartPoint="0,0" EndPoint="0,1">

<LinearGradientBrush.GradientStops>

<GradientStopCollection x:Uid="GradientStopCollection_8">

<GradientStop x:Uid="GradientStop_40" Color="transparent" Offset="0" />

<GradientStop x:Uid="GradientStop_41" Color="#44ffffff" Offset="1" />

</GradientStopCollection>

</LinearGradientBrush.GradientStops>

</LinearGradientBrush>

</Ellipse.Stroke>

</Ellipse>

<Ellipse x:Uid="Ellipse_4" Opacity="0.5" Stroke="Transparent" Margin="1">

<Ellipse.Fill>

<LinearGradientBrush x:Uid="LinearGradientBrush_19" StartPoint="0,0" EndPoint="0,1">

<LinearGradientBrush.GradientStops>

<GradientStopCollection x:Uid="GradientStopCollection_9">

<GradientStop x:Uid="GradientStop_42" Color="white" Offset="0" />

<GradientStop x:Uid="GradientStop_43" Color="transparent" Offset="1" />

</GradientStopCollection>

</LinearGradientBrush.GradientStops>

</LinearGradientBrush>

</Ellipse.Fill>

</Ellipse>

<Path x:Uid="Path_2" Stretch="Fill" Width="19.878" Height="19.878" StrokeThickness="5" Stroke="#FFFFFFFF" StrokeStartLineCap="Round" StrokeEndLineCap="Round" Data="M 200,0 L 0,200 M 0,0 L 200,200" />

</Grid>

</Canvas>

Bam! You've got an error icon that looks like this:

![]()

To use it, just take any surface and add in a reference to the custom Canvas. Here it is in a pseudo-MessageBox like window:

Enjoy!

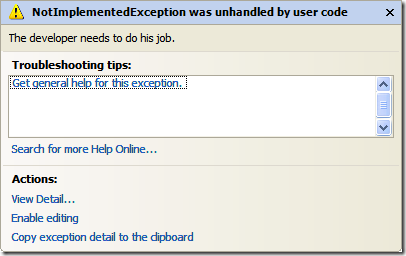

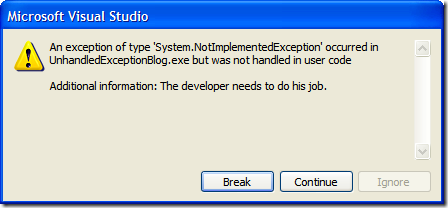

In your own applications you'll generally want a "catch-all" handler that will take care of unhandled exceptions. In WinForms apps this is done by creating an unhandled exception delegate and (optionally) creating an AppDomain unhandled exception handler. Peter Bromberg has a good article on all of this here and I wrote about the various options for WinForms apps here.

With XBAP (XAML Browser Applications) the rules are slightly different so here's one way to do it.

Take your existing XBAP app (or create a new one) and in the App.xaml.cs file you'll want to create a new event handler for unhandled exceptions. You can do this in the Startup method like so:

protected override void OnStartup(StartupEventArgs e)

{

DispatcherUnhandledException += App_DispatcherUnhandledException;

base.OnStartup(e);

}

In our exception handler, we'll do two things. First we'll set the exception to be handled and then we'll set the content of the MainWindow (a property of the Application class) to be a new exception handler page.

private void App_DispatcherUnhandledException(object sender, DispatcherUnhandledExceptionEventArgs e)

{

e.Handled = true;

MainWindow.Content = new ExceptionHandlerPage();

}

That's really the basics and works. However you don't have the exception information passing onto the new page. We can do something simple for now. Here's the XAML for a simple error handling page:

<Page x:Class="XbapExceptionHandlerSpike.ExceptionHandlerPage"

xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation"

xmlns:x="http://schemas.microsoft.com/winfx/2006/xaml"

Width="300" Height="300"

Title="ExceptionHandlerPage">

<Grid>

<Grid.RowDefinitions>

<RowDefinition Height="23" />

<RowDefinition />

</Grid.RowDefinitions>

<TextBlock Margin="10,0,0,0" VerticalAlignment="Center" Grid.Row="0" Text="An exception has occured. Here are the details:" />

<TextBlock Margin="10,0,0,0" Grid.Row="1" x:Name="ErrorInformation" Foreground="Red" FontFamily="Consolas" TextWrapping="Wrap" />

</Grid>

</Page>

I gave a name to the TextBlock in the second row in the grid. This is the control that will display our error message. I've also styled it and set the font to be a mono-spaced font.

We can update our creation of the ExceptionHandlerPage class to include the exception details like so:

private void App_DispatcherUnhandledException(object sender, DispatcherUnhandledExceptionEventArgs e)

{

e.Handled = true;

var page = new ExceptionHandlerPage

{

ErrorInformation = {Text = e.Exception.Message}

};

MainWindow.Content = page;

}

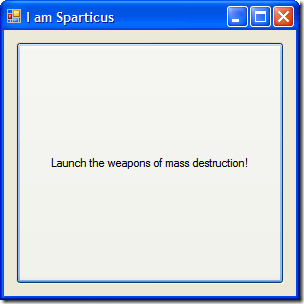

Now our page displays the error with the details provided:

Again, this is really simple and bare-bones. You can get creative with it with things like fancy fonts, dancing bears, floating borders, etc. and passing along the entire Exception (so you might walk through the inner exceptions and details) and even log the exception to your bug tracking system. Jeff Atwood has a great article on creating friendly custom Exception Handlers (one for WinForms here, one for ASP.NET here). A WPF version might be useful.

As with WPF apps, there are a lot of ways to skin this cat. This is just my take on it. Feel free to offer your own twist.

James Kovacs and I had the pleasure of presenting to a well fed crowd of about 150 crazed developers and IT folk at Shaw here in Calgary last night. We did a drive by discussion of Prism. I say drive by because we really just scratched the surface of what Composite Applications are all about (you can only do so much in 40 minutes on the subject) but we crammed in what we could. I hope it was a good intro to everyone and encourage you to head out and get Prism'd and see what the Patterns and Practices group has put together, it's a really great framework for building WPF based composite apps.

Thanks again to everyone who came out (even if it was for the food) and I had a blast presenting the content with James to you guys. Here's a copy of the slide deck if you're interested and here are a few key links from it:

Thanks!

You know there are things in life that you never notice or worry about. This is one of them.

The MessageBox API in Windows Forms allows you to specify message box icons to display along side your all-important message you're communicating to the user (System Error, Hard Drive Full, Your Cat is Pregnant, that sort of thing). Icons can help add a little punch to an otherwise drab afternoon staring at rows and rows of spreadsheets and overdue time cards.

The 9 options you can select from to kick your otherwise drab battleship gray business application up are: Asterix, Error, Exclamation, Hand, Information, Question, Stop, Warning, and None. None of course presents no icon. The others provide you with an icon that matches the description. Or does it?

Here's the Asterix option:

![]()

And here's the Information option:

Hmmm... something isn't right here. Am I seeing double? No, both options use the same icon.

Onto the Exclamation icon:

And the Warning one:

Hey! You're copying and pasting the same image!

No friends, I really did write a program to do all this (contact me for licensing and source code rights) and they really are different.

Finally here's the Error, Hand, and Stop icons (all conveniently wrapped up in one picture):

Yup. All three use the red 'X' icon. You would think, oh I don't know, the Hand would display a Hand icon and Stop might, oh what the heck I'll take a stab at this, display a Stop sign. Now I'm all for saving on resources. After all, icons cost money to make; take up valuable bytes in the CLR; and lord knows how many hours of debate over the right shade of yellow for the Warning icon was spent. However if you're going to provide a programmer 9 different options (which all seem reasonable and unique) then why would you only provide 4 icons (I neglected to show the Question icon which is indeed a question mark). Did the MessageBox team have their budget cut or something?

In any case, this seemingly random blog entry has been brought to you by the letters M, M, and M and the number M.

Today they launch the National Do Not Call list in Canada, a bill that was passed 3 years ago but it's taken this long to build the service (guess they don't practice Agile in their software delivery process). From the looks of the service you might be jumping for joy thinking all those annoying calls at dinner time will stop. Think again.

I went to register my number with the National Do Not Call list but I’m pretty skeptical that it’s of any value. There are a whack of exclusions:

Hmmm. Doesn't leave much left does it?

In addition, apparently it costs money for the telemarketers to subscribe to the list. It’s not clear that if they don’t subscribe to the list they can/cannot call you. All it says in the rules are that “Telemarketers and Clients of Telemarketers must subscribe to the National DNCL and pay any applicable application fees”.

I suppose it will reduce the number of “cold call” telemarketers that will call you, but I’m suspicious that it’ll really reduce much. Looking at the exclusion list, basically there’s very few organizations that will fit into the non-exclusion list and are open to interrupt your dinner time (or quality Halo 3 time).

For the most part, I get called by my own bank and credit card companies (offering me extra insurance or whatever the flavor of the day is). According to the rules since I do business with them, unless I tell them to put me on their internal DNC list, they’ll still continue to call me with their offers I can’t be bothered with. That’s if they even have an internal DNC list and there’s no legislation that requires them to.

Some people are welcoming the list, I just have doubts that it'll do much good. I agree that it's a good thing but there are too many restrictions, rules, and loopholes to make it really value-add to the consumer. True, you get off those cold-call lists from unknown telemarketers but in my experience I get more calls from business that I work with (banks, etc.) than unknown telemarketers and they're excluded (as is newspaper/magazine subscription calls which I get a lot of those too).

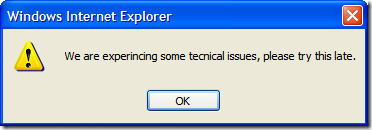

BTW, I tried to register my number but it took me to a page that simply said:

The service is not available. Please try again later.

I guess they didn’t figure anyone would actually use it or maybe the webserver just fell over and nobody cares.

Brilliant.

I'm presenting a talk around Terrarium development at the Edmonton .NET User Group on September 25th. The talk is focused on upgrading a legacy app (1.1) to 2.0 (and beyond to 3.5 eventually), building and running your own Terrarium (complete with man-eating critters), and the future roadmap.

Here's the session abstract:

Terrarium was created by members of the .NET Framework team in the .NET Framework 1.0 timeframe and was used initially as an internal test application. In Terrarium, you can create herbivores, carnivores, or plants and then introduce them into a peer-to-peer, networked ecosystem where they complete for survival. Terrarium demonstrates some of the features of the .NET Framework, including Windows Forms integration with DirectX; XML Web services; support for peer-to-peer networking; support for multiple programming languages; the capability to update smart client, or Windows-based, applications via a remote Web server; and the evidence-based and code access security infrastructure. This session is to explore the newly open sourced tool and talk about aspects and challenges around porting the 1.1 code to 2.0, introducing new framework features, updating the architecture. As well, we’ll look at building new creatures to introduce to your terrarium; how the entire eco-system works from a developers perspective, and the future roadmap where the Terrarium community is going.

I'll also be presenting the same session to the Calgary .NET User Group, we're just finalizing a date. See you there!

Update: The Calgary .NET User Group presentation is confirmed for October 1st. Details can be found here on their site. The talk will be titled "The interaction of feeding and mating in the software development control of a Terrarium".

Great news for Canadians! No, we haven't discovered a new source of unlimited clean-burning fuel and Stephen Harper is still our Prime Minister (for now).

Microsoft Canada has put together an awesome road show and it's coming soon. This is very much a mini-TechEd style conference but with a few twists. First off, it's Canadian based and will be hitting the major cities over the next couple of months. Second, some of the content is delivered by local freaks (such as myself) rather than the same old canned presentations by MSFT speakers. Don't get me wrong, the Canadian Developer support team (John Bristowe, Jean-Luc David, et. al.) are great but hey everyone has seen and heard them over and over again (and frankly Bristowes drag-n-drop sessions make me want to hurl). Now we can grab some premium air time and talk from the hip.

Unlike the previous road show launches and sessions, this is a paid event. Wait, stick around. Okay, I understand and hear ya. Why buy the milk when I can get the cow for free? Here's some factoids to make your want to rush out and buy your Donald Belcham Secret Decoder Ring (and optional Justice Gray Hair Tonic Revitalizer). Rather than a single day, the event is spread out over 2 days (you can choose to attend a single day or both, your choice). In addition, there are over 30 technical sessions all on new technology (nothing old and crappy here, well maybe some old-ish stuff but not crappy) including Windows and Web development, Virtualization, and Infrastructure. There are also "birds-of-a-feather" type sessions and some after party geek fests going on. All in all, a pretty slick way to kill off two days in cold, cold winter (and that means you Winnipeg!).

The two day conference is happening in the larger cities (Toronto, Montreal, Calgary, and Vancouver) with the one day conference happening in the less fortunate ones (Winnipeg, Halifax and Ottawa). The early bird price (before October 15) for one day is $124.99 or $249.99 for both days. After October 15th the price goes up to double at $249.99 and $499.99 respectively (so obviously if money is a concern I suggest you get in before October 15th). Space *is* limited to 5000 people so don't wait to sit out on this one.

Swag? Did I mention the swag? Any conference worth it's salt needs swag and this one is no exception. Each attendee gets the following goodies:

More? Oh yeah, I mentioned the presentations. I'm planning on presenting a whack of talks on WPF covering (Databinding, WinForms integration, CompositeWPF, LOB apps, etc.) so that should be fun. This is tentative as I haven't got the final word yet to take the stage (and after reading this blog post I may not be allowed to show up), but whatever happens I promise a) lots of code, no fluff b) flying monkeys c) Terrarium, Terrarium, Terrarium d) no concealed lizards or logging chains of any kind e) comic book cross-overs and f) did I mention the code? Beat those promise Mr. Harper!

The TechDays website will be online soon with more details and registration info. You can find that here. You can also check out D'Arcys twisted take on this here, and Miguel's more calmer preview here.

See you there!

I was trying to deploy a new WPF app via XBAP today and we were experiencing an odd deployment error.

When we deployed and launched the app we were getting this error:

Startup URI: http://localhost/XbapNHibernateDeploymentSpike/XbapNHibernateDeploymentSpike.xbap

Application Identity: http://localhost/XbapNHibernateDeploymentSpike/XbapNHibernateDeploymentSpike.xbap#XbapNHibernateDeploymentSpike.xbap, Version=1.0.0.5, Culture=neutral, PublicKeyToken=8c4ee06d2506bc6f, processorArchitecture=msil/XbapNHibernateDeploymentSpike.exe, Version=1.0.0.5, Culture=neutral, PublicKeyToken=8c4ee06d2506bc6f, processorArchitecture=msil, type=win32

System.Runtime.Serialization.SerializationException: Unable to find assembly 'NHibernate, Version=2.0.0.4000, Culture=neutral, PublicKeyToken=aa95f207798dfdb4'.

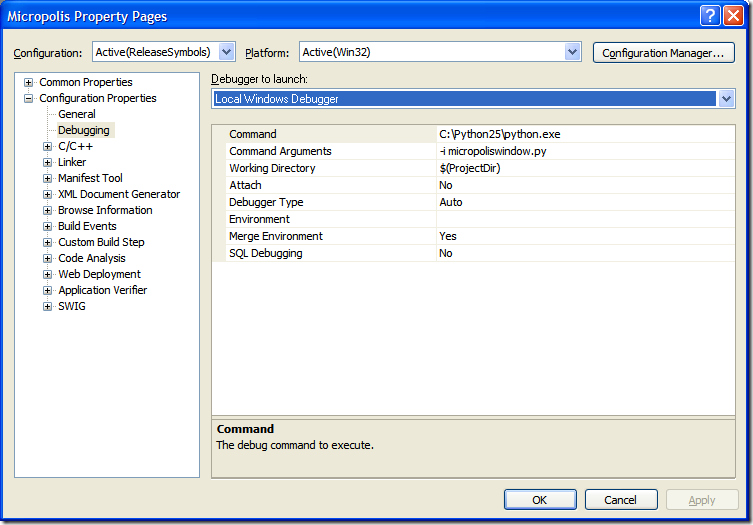

This was odd because I knew that NHibernate.dll was being distributed with the app. I confirmed. There it was in the manifest file and in the deployment directory. Checking the Application Files settings inside of Visual Studio (this was just a test deployment, please don't deploy apps from inside Visual Studio) it was there and included:

The generated manifest file showed it was included as well:

<dependency>

<dependentAssembly dependencyType="install" allowDelayedBinding="true" codebase="NHibernate.dll" size="1638400">

<assemblyIdentity name="NHibernate" version="2.0.0.4000" publicKeyToken="AA95F207798DFDB4" language="neutral" processorArchitecture="msil" />

<hash>

<dsig:Transforms>

<dsig:Transform Algorithm="urn:schemas-microsoft-com:HashTransforms.Identity" />

</dsig:Transforms>

<dsig:DigestMethod Algorithm="http://www.w3.org/2000/09/xmldsig#sha1" />

<dsig:DigestValue>hUljboZ3kBAzBFbanjzLCJCMua0=</dsig:DigestValue>

</hash>

</dependentAssembly>

</dependency>

This didn’t make any sense. It was in the manifest, in the published directory, and everything looked like it should work. Google wasn’t much help here as I was in uncharted territory so I just starting poking around.

Finally I came to the problem and a solution. The hibernate.cfg.xml file was being included as a data file and spec’d out in the manifest like this:

<file name="hibernate.cfg.xml" size="604" writeableType="applicationData">

<hash>

<dsig:Transforms>

<dsig:Transform Algorithm="urn:schemas-microsoft-com:HashTransforms.Identity" />

</dsig:Transforms>

<dsig:DigestMethod Algorithm="http://www.w3.org/2000/09/xmldsig#sha1" />

<dsig:DigestValue>eICryxpUlz1ZHRpxHt+P2z8kBJo=</dsig:DigestValue>

</hash>

</file>

Changing it from “Data File” to “Include” solved the problem.

In the working version of the manifest file, the NHibernate config file is spec’d out as this:

<file name="hibernate.cfg.xml" size="604">

<hash>

<dsig:Transforms>

<dsig:Transform Algorithm="urn:schemas-microsoft-com:HashTransforms.Identity" />

</dsig:Transforms>

<dsig:DigestMethod Algorithm="http://www.w3.org/2000/09/xmldsig#sha1" />

<dsig:DigestValue>eICryxpUlz1ZHRpxHt+P2z8kBJo=</dsig:DigestValue>

</hash>

</file>

Note the difference in the file declaration. The non-working one includes an attribute: writeableType="applicationData"

This is pretty obscure and maybe I’m just a simple guy, but telling me that you can’t find NHibernate.dll when you were really looking for a different isn’t very intuitive. Yes, I checked the stack trace, thinking that maybe the config file wasn’t there and it was an NHibernate exception being thrown or gobbled up, no such luck. The error was being reported out of PresentationHost.exe long before NHibernate was even being called.

Don’t ask me why changing the Publish Status from Data File to Include fixes the issue, I just work here.

Update: Sure enough, after you publish something on the internet along comes the information you were looking for. Buried somewhere on the web I found this tidbit:

If you set the 'Publish Status' of the xml data file to "Data File", this file will reside in the Data directory in the end user's Local Settings folder after installation. If you set the 'Publish Status' of the xml data file to "Include", the file will be output to the Application directory.

For xbap applications, the "Application directory" is the IE cache but when the Publish Status was set to "Data File" rather than "Include" it was going to nowhere land.

The SharePoint world is one less today.

It's with sad news that I have to say but Patrick Tisseghem, a SharePoint MVP that I've known for years, suddenly passed away on Wednesday September 3. He died due to heart failure in Gothenburg, Sweden.

I only met Patrick once at the first Summit I attended but he was an awesome and always interesting character. He was a talented guy and was always telling us about his beer trips throughout Europe while he was delivering SharePoint training.

He was a wonderful teacher, diligent author, and great guy to hang out with and talk to. He will be sorely missed.

Patrick is survived by his wife and two daughters. Our thoughts and prayers are with them.

We're starting a new project and naturally we looked at leveraging the latest .NET framework features (auto properties, extension methods, lamdas, LINQ, etc.). The question of user interface came up and we had some decisions to make.

This specific project we looked at building one client web front-end (for the majority of the users) and a SmartClient for a smaller contigent that requires a little more real-time feel along with more rich features the web client might not be able to do (without a lot of heavy lifting). As we were looking to start things, the notion of WPF vs. WinForms came up. This led us down a path to look at Prism (the Composite WPF project from the Patterns and Practices group) which has a lot to offer in frameworks and being able to do more with WPF (Prism is WPF only). WPF also has some benefits to automation (there are some new products that are tying into the automation frameworks) and testing. The pretty UI is just an afterthought and not the focus since we're building what would be considered a "traditional" business application (forms and maybe grids and reports).

WPF also has the advantage that we could deliver the application using xbap which kicks ClickOnce up a notch. The Prism framework is based on modules loading into XAML regions so we were even looking at re-using XAML user controls and plugging them into different clients.

This has kicked off a bit of a controversy and discussion. Is WPF really suited to business applications? Of course the answer is (as always) it depends. If you're business application requires a rich UI and a visual paradigm, then the obvious answer is yes. It's more intuitive for a user to drag a part from one component to another (say in a room designer) than to pick items from a grid and enter values in a form.